Dan Shipper

ceo @every — the only subscription you need to stay at the edge of AI


The distance between a vibe-coded prototype and a production app used to be years of experience. Now you can get there in two days. @hammer_mt's upcoming workshop at @every covers it all—OAuth, databases, deployment. March 12–13. I'm guest instructing with @kieranklaassen. Details: https://products101.every.to

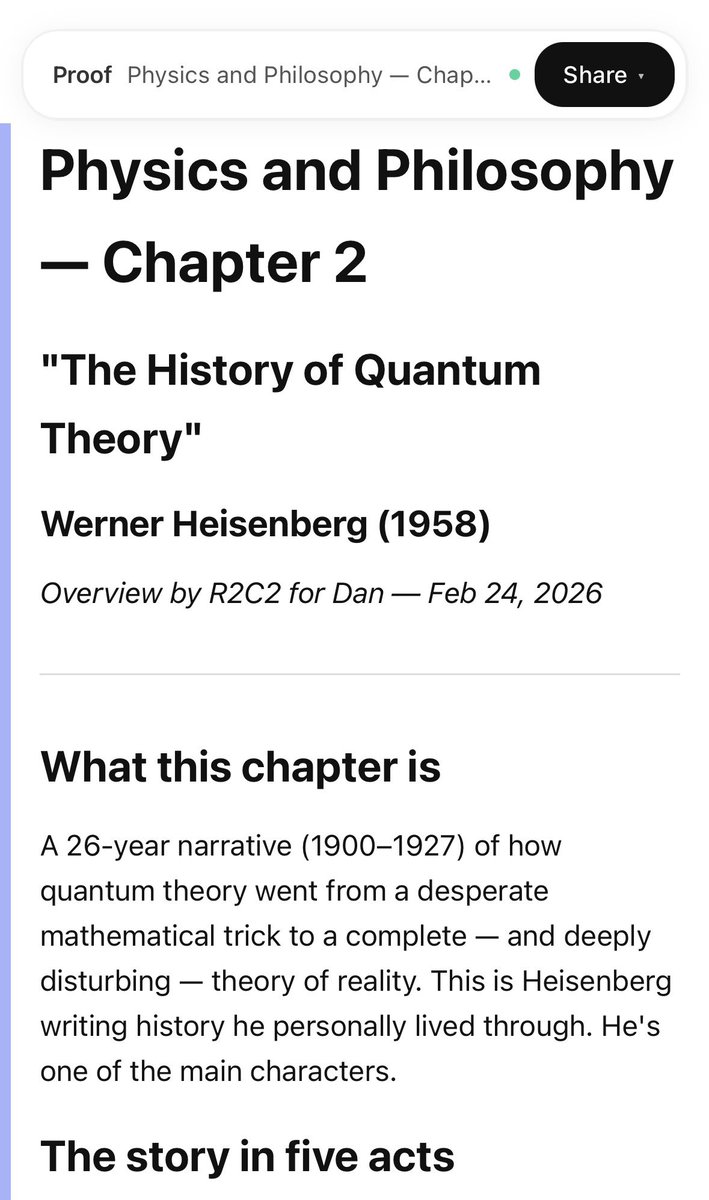

overnight, i had my Claw read a book ive been trying to read but haven't been able to get through and write me its own summary of the chapter. ideal morning reading

PSA if you're iterating on front-end designs, you should try Claude Code desktop. it's great

OpenAI’s hottest app isn’t ChatGPT—it’s Codex.
In the last few weeks alone, the Codex team shipped a desktop app, GPT-5.3 Codex (a new flagship model), and Spark, the fastest coding model I’ve ever used. Usage has grown fivefold since January and over a million people now use Codex weekly. Codex was also the app that OpenAI chose to run an ad for in the Super Bowl.
I talked to Thibault (@thsottiaux), head of Codex, and Andrew (@ajambrosino), a member of technical staff who built the Codex app, for @every’s AI & I about what OpenAI is building and how they’re using it internally.
We get into:
- Why they built a GUI instead of a terminal. Terminals work for quick tasks, they say, but feel limiting when you’re running multiple agents in parallel. The IDE, meanwhile, overwhelms users—and the Codex team wants the AI to dynamically decide which tools to show you for a given task.
- How they’re teaching the model to read between the lines. Codex is great at following instructions, but optimize too hard in that direction, and it starts taking you literally—like copying a typo directly into the code. The team obsesses over this tradeoff, and is also introducing “personalities,” modes users can toggle between that control how blunt or supportive the model feels.
- How OpenAI uses its own coding agent. Codex lets you schedule prompts to run on a recurring basis, and the team has dozens of automations running at all times. For example, one scans for merge conflicts every couple of hours so code is always ready to ship, and another picks a random file from the codebase multiple times a day and hunts for bugs no one would've gone looking for.
- Why speed is a dimension of intelligence. OpenAI’s newest model (Spark) is so fast that they actually slow it down so you can read the output. They see the speed enabling three things: staying super in the flow, replacing brittle developer tools with intelligent ones that can adapt on the fly, and redirecting the model mid-task—especially with voice—so coding starts to feel more and more like a conversation.
- Code review is the next bottleneck. Models can generate code faster than ever, but someone still has to verify that it works. The team is exploring a future where the model proves its own fix works—retracing the click path a user would take, screenshotting the results, and attaching the evidence to a pull request.
This is a must-watch for anyone who uses AI coding agents—and is curious about the future of programming. Watch below!
Timestamps:
Introduction: 00:01:27
OpenAI’s evolving bet on its coding agent: 00:05:27
The choice to invest in a GUI (over a terminal): 00:09:42
The AI workflows that the Codex team relies on to ship: 00:20:38
Teaching Codex how to read between the lines: 00:26:45
Building affordances for a lightning-fast model: 00:28:45
Why speed is a dimension of intelligence: 00:33:15
Code review is the next bottleneck for coding agents: 00:36:30
How the Codex team positions against the competition: 00:41:24

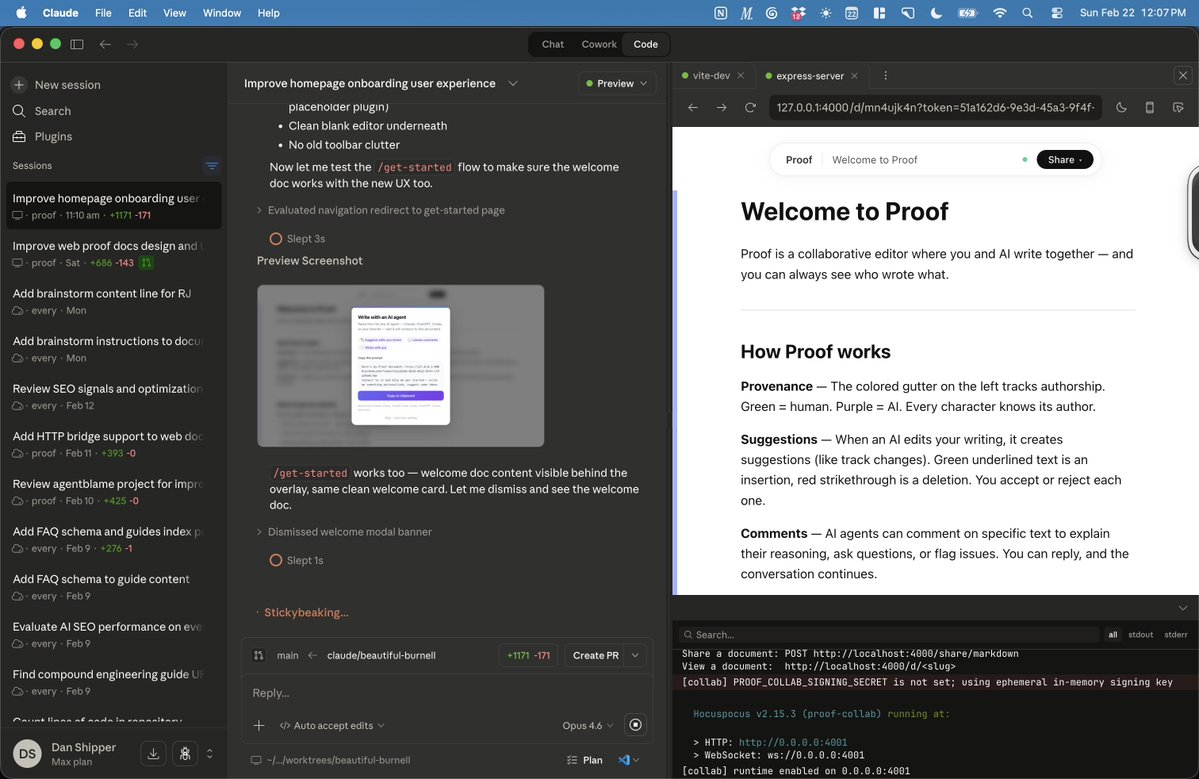

i made a live collaborative markdown editor for humans and agents
then i trained my claw to use it to make all of the repetitive copy edits we make every day at @every
this is me demoing it for our editor in chief @katelaurielee

📝 The Two-slice Team
For the past two decades, Amazon’s “two-pizza rule” has been the gold standard for team size. The story goes like this: At a company retreat in 2002, when Amazon managers wanted more communication, Jeff Bezos fired back that “communication is terrible!” A few weeks later, he restructured the company around small autonomous teams. If a team had more than 10 people—more than could be fed by two pizzas—it was too big.
Twenty-four years later, two-pizza teams are now themselves too big for building software products. When each employee is armed with Opus 4.6 and Codex 5.3, the ideal team size shrinks even further.
I call it the two-slice team. Two slices, to feed one person. (These are New York slices that you fold in half and eat standing at a counter.)
This is how we structure our product teams at Every. We have four software products, each run by a single person. Ninety-nine percent of our code is written by AI agents. Overall, we have six business units with just 20 full-time employees.
The two-slice team structure lets us ship faster, pivot more quickly, and maintain the entrepreneurial spirit that larger teams lose.
And these are real products, not just weekend vibe coding demos. For example, Monologue, our smart dictation app run by Naveen Naidu, is used about 30,000 times a day to transcribe 1.5 million words. The codebase totals 143,000 lines of code and Naveen’s written almost every single line of it himself with the help of Codex and Opus. AI also helps Naveen do customer service and market research, and think through business and product strategy. It allows him to do by himself what would have taken 3–4 people before AI.
A two-slice team works well as a starting point for software products. But as these products have grown and as we’ve introduced new products, we’ve also had to re-invent how the rest of the organization supports these teams.
How organizations support two-slice teams
Rather than putting more full-time employees on existing products, two-slice teams pull in help as needed from both inside and outside of Every.
To enable this, our design, growth, and marketing teams act as internal agencies that move team members in and out of projects as needed. For example, our creative director Lucas Crespo runs a three-person team. A team member might help design a new Monologue screen, but that won’t be all they work on. They might also design a promotional banner for Spiral, our AI writing helper, or an email template for Cora, our AI email assistant. In any given week, one creative team member might touch two or more of our products.
Sometimes these resources come from outside of Every too. Cora, run by Kieran Klaassen, employs a full-stack senior engineer who helps out a few days a week with hairy problems that current AI models aren’t great at solving in one go. The engineer dips in and out to help build the infrastructure that lets Cora process millions of emails per day.
This kind of flexible structure is only possible because AI lets internal employees and freelancers alike get up to speed on an unfamiliar product in minutes. For example, a technical freelancer can quickly understand the codebase using AI, without Kieran having to step away from his own work to help explain.
We think it’s a superior working experience for everyone involved. General managers get a lot of autonomy and can move extremely quickly on new opportunities. Internal team members get to touch different products and problems every day, so the work is always interesting.
In fact, I’ve been acting as a two-slice team myself. For the last few weeks I’ve been building an agent-native markdown editor called Proof. It lets you easily collaborate on markdown documents with multiple humans and AI agents together. It also tracks provenance so you can tell who wrote what.
It’s a great example of what’s possible with a two-slice team size. An editor like this would have previously taken 3–4 engineers six months to build. Instead, I made it in my spare time.
Proof has started to get traction inside of Every. We use it to collaborate on the plan files generated by coding agents. It’s available now for paid Every subscribers. If you’re interested, give it a shot.
http://x.com/i/article/20223455244985303…

BREAKING: @OpenAI just launched a new Codex model, Spark—it serves at 1,000 tokens per second. It's blow-your-hair-back fast.
It's their first model publicly released on Cerebras hardware, and you can see the difference.
We've been testing it internally @every for the last week or so, and here's our vibe check:
- It's so fast it keeps you in flow more—way less waiting time
- It's not as smart as Codex 5.3 or Opus 4.6
- It's very good for tasks that are easy to validate or non-production coding tasks
This kind of speed introduces totally new bottlenecks in your coding workflow. Suddenly, the model can produce 10 pages of code and summaries in just a few seconds. It requires totally new UX in order to manage.
We'll talk about this and more in a livestream from @every on YouTube and X soon. Stay hydrated!

Alex Matthew (@alxmthew) is 17 years old and goes to an AI-powered high school.
He does his learning in 3 hours a day through an AI platform that personalizes his lessons. He has no teachers. Instead, the adults in the classroom are called “guides.” Their job isn’t to deliver information through lectures—it’s to help point kids in the right direction.
Alex spends hours a day at school building a real-world project of his own creation. In his case, it’s @berryaiplushies, an AI stuffed animal designed to help teenagers become more self-aware.
I’m fascinated by how AI might change education: how we learn, and what we learn. That’s why I was psyched that Alex’s parents okay’d him to come on @every’s AI & I.
Alex is the youngest guest we’ve ever had, and we covered a lot of ground:
- What a day inside Alpha High School looks like
- Why he doesn't use AI to cheat—even though he easily could
- Whether ambitious teenagers still care about college
- How Gen Z really feels about social media, books, and reading
- His rankings of the foundation models from @OpenAI, @AnthropicAI, @Google, and @xAI
This is a must-watch for anyone curious about what growing up with AI looks like from the inside. The kids are going to be alright. Watch below!
Timestamps:
Introduction: 00:01:30
A typical day inside Alpha High School: 00:04:08
Why Alpha replaced teachers with “guides” focused on motivating students: 00:06:54
Why Alex doesn’t use AI to cheat, even though he could: 00:12:09
Do ambitious teenagers care about going to college?: 00:19:51
Alex’s take on how Gen Z thinks about AI: 00:25:12
How Alex thinks about the effects of social media: 00:27:52
Gen Z’s relationship with books and reading: 00:31:29
Alex ranks ChatGPT, Claude, Gemini, and Grok: 00:38:57
Why Alex is building Berry, an AI stuffed animal for teen mental health: 00:47:12

investment bankers who just found out about Claude Code: SELL SELL SAAS IS DEAD THERE'S SOMETHING GOING ON
everyone in tech:

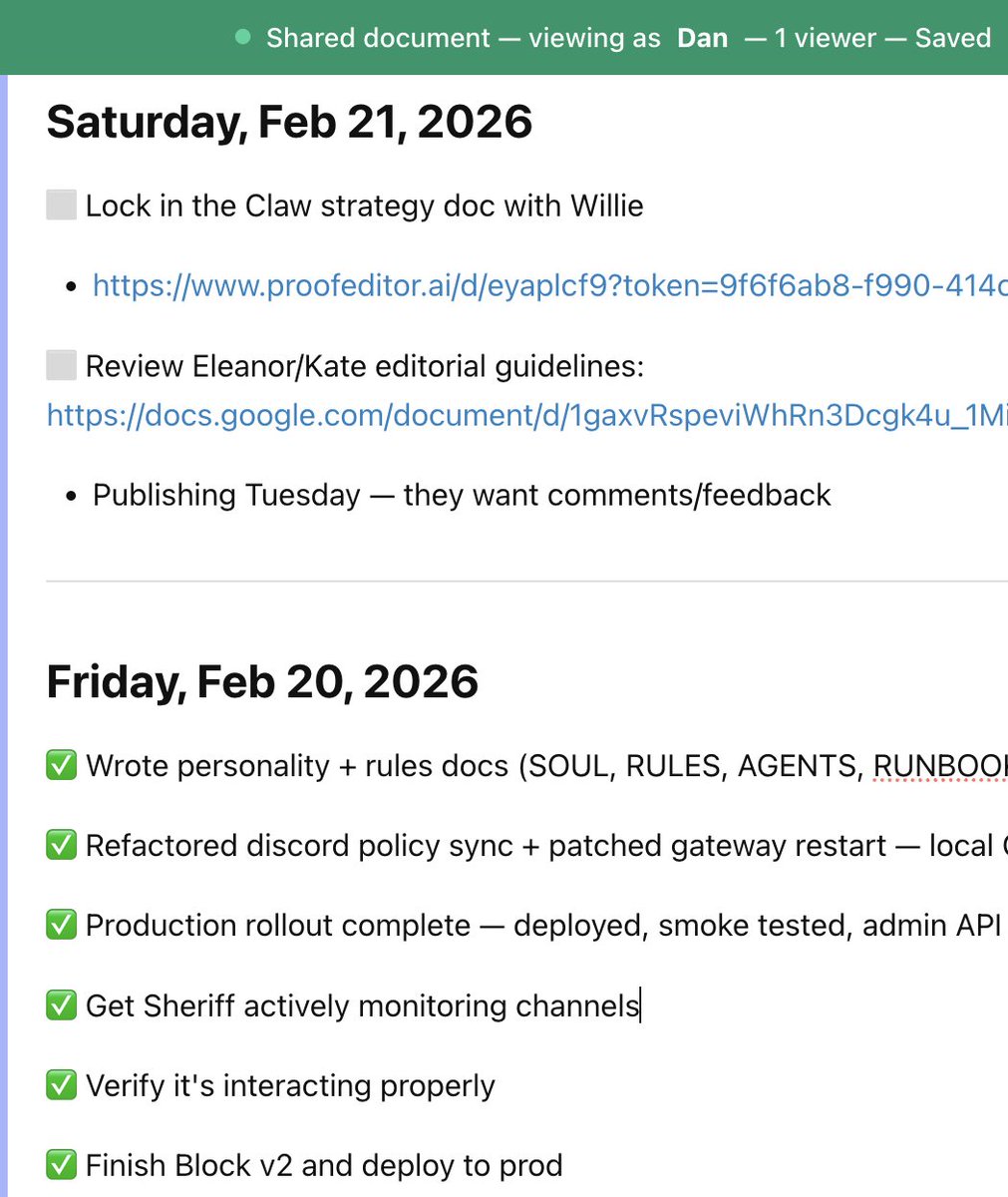

new pattern: keep a running live daily todo list as a Proof doc that my Claw and i collaborate on every day
makes sure things don't get lost and i feel organized

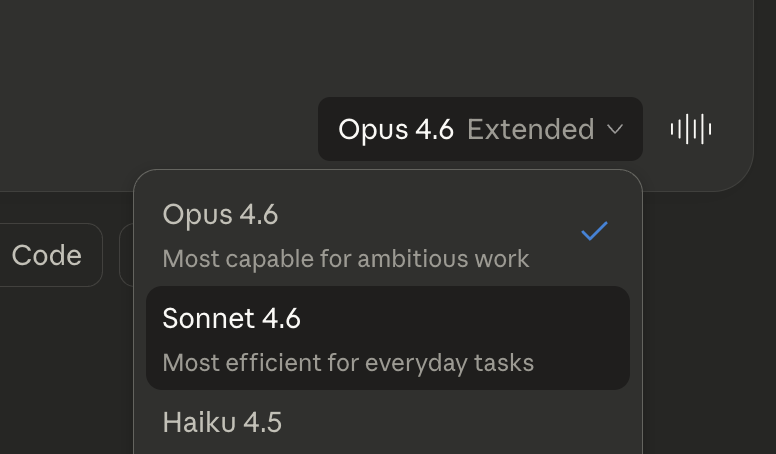

🔴Sonnet 4.6 just dropped. Anthropic claims it's Opus performance at Sonnet pricing. We're testing it live right now—coding, agents, long context. Join the vibe check: https://x.com/i/broadcasts/1vAGRQQOEOYKl…

Is anyone’s accountant using Claude Code yet? I’m on the hunt for one for my taxes.

it took us 6 years to get to $1m ARR @every and 7 months to get to $2m
excited for 5m :)

I use @OpenAI’s browser Atlas every day, and this week, I got to talk to the team building it.
Ben Goodger (@bengoodger), Atlas’s head of engineering, and Darin Fisher (@darinwf), member of technical staff, are legends of the browser world. They’ve worked together on Netscape, Firefox, Chrome—and now Atlas.
I had them on @every’s AI & I to talk about agentic browsers, the future of the web, and what it’s like to build a browser with Codex.
We get into:
- If agents can browse for you, do traditional websites become obsolete? Ben and Darin don’t think so. Yes, we’ll hand off tedious tasks—but there’s still plenty we’ll want to do ourselves, like travel planning or window shopping. Darin used a metaphor: He loves taking Waymos, but he also loves driving stick; sometimes you want to be chauffeured, other times you want the satisfaction of being in control.
- A browser that knows when to step in. Browsers have always been like a taxi, ferrying you to a destination, but agentic ones are more like tour guides, helping you decide where to go in the first place. Ben says that Atlas is built to balance both: The interface stays minimal and familiar, but ChatGPT sits at the heart, ready when you need it.
- How the Atlas team uses Codex to move faster. After coding browsers by hand for decades, Ben and Darin say that building with AI feels fundamentally different. More than half of Atlas's code was written by Codex. They've found it especially useful for navigating the complex Chromium codebase, prototyping quickly, and learning new techniques, like how to set up a particular animation or nail a UI effect.
- The craft of coding with AI. AI coding tools can feel like a loss to veteran engineers: The work gets faster, but less personal. Darin acknowledges that tension, describing coding as therapeutic—almost like art—but sees Codex as a way to offload the monotony while preserving the satisfying craft. Ben agrees, arguing that a human’s role is to supply the context behind a decision, which is likely not in the code itself.
This is a must-watch for anyone curious about the future of the web and how we will interface with it. Watch below!
Timestamps:
Introduction: 00:01:57
Designing an AI browser that’s intuitive to use: 00:11:51
How the web changes if agents do most of the browsing: 00:15:24
Why traditional websites will not become obsolete: 00:25:06
A browser that stays out of the way versus one that shows you around: 00:29:00
How the team uses Codex to build Atlas: 00:39:51
The craft of coding with AI tools: 00:44:47
Why Ben and Darin care so much about browsers: 00:52:33

I've watched @kplikethebird rebuild her entire writing process around AI over the past year. Her writing is more distinctive, more detailed, and more her. And she's been writing more frequently, not less. She's teaching the full system live this Friday. Free for paid Every subscribers. http://every.to/events/writing-camp


📝 What personal software actually is
Personal software is not vibe-coded SaaS. Building software is a skill. Most people don't have it and don't want it, even if a computer does the coding for them.
Personal software is an agent you have a relationship with. You teach it, correct it, shape it. It grows a personality and skills in response to you. This is what OpenClaw is showing us.
Personal agents are going to be the most broadly distributed way of creating new software because code is written as a function of your relationship. Everyone knows how to communicate with, care for, and teach an agent—because that's what we do with other humans in our lives all of the time.
Observations from claw-human psychology
We have about 20 full-time people at @every and everyone has a claw now.
Your claw becomes a mirror of you. If you're great at growth, your claw becomes great at growth. If you're a great writer, your agent becomes interested in literature and sentences. Because you use your claw every day—because you have a relationship that you depend on—it writes trustable code to help it do the tasks you rely on it for. The code is trustable because if it wasn't, you'd change it. Trust increases as a function of the closeness of your relationship with your claw.
If you trust your claw for certain tasks, I'll trust your claw for them too. What we see internally @every is someone using their claw publicly to do something like check growth metrics, and then that spreads virally through the rest of the org. Trust transfers from human to claw: the claw inherits your credibility.
Claws specialize just like humans. Theoretically any claw can do any task inside of @every, but because tasks are complex we trust a claw who is run by a human who is good at that task. This means teams of agents are definitely going to be a thing, and it will not be one agent to rule them all. Agents mirror your org chart.
Everyone will have an agent that promotes them out of their current job—we call these agents deputies. You teach it, it's yours. You give it skills, personality, workflows. You manage it like an employee—except it never sleeps and it never forgets what you told it.
When a few people on your team have agents, you need a Sheriff. A Sheriff is just an agent with more permissions—it manages who has access to what, sets the rules of engagement, knows the basics of the business, and orients new agents when they arrive.
All http://x.com/i/article/20260123934661632…

nbd, just having my claw monitor an in-progress meeting being recorded in @NotionHQ so i can add thoughts async without actually being in the meeting

BREAKING: Anthropic drops Sonnet 4.6
It's Opus-like intelligence at Sonnet prices. It also includes a 1M context window in beta.
Vibe check coming soon from @every!

the new openai model Spark produces code basically instantly
that changes a LOT. you should play with it today