Deedy

VC at @MenloVentures. Formerly founding team @glean, @Google Search. @Cornell CS. Tweets about tech, immigration, India, fitness and search.

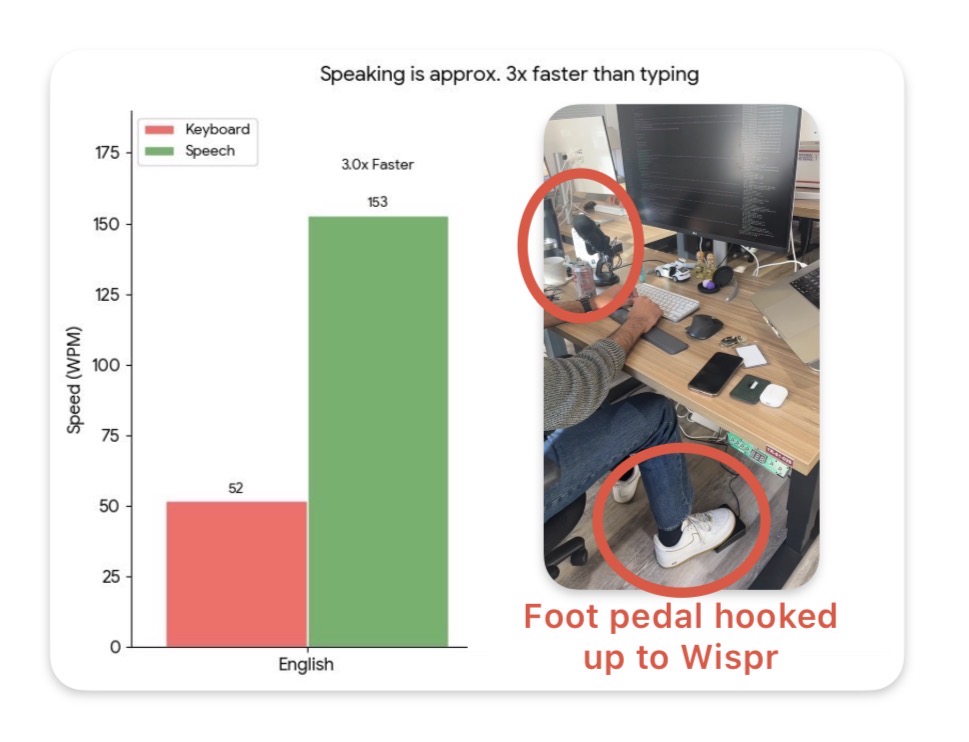

Voice is ~3x faster than typing on mobile and desktop, but dictation accuracy always sucked. Now, products like Wispr boast a ~85% "zero edit rate", and it's my daily driver for zipping through Slack/email/text responses on my walks back home. And it's slowly becoming omnipresent in the wild too. At a random startup office I visited last month, I saw this really cool setup where an engineer hooked up a foot pedal to trigger Wispr! They just launched on Android too and are giving away six months for free:

I was wrong about Sarvam. When I wrote about them a year ago, I felt like the direction to train small "Indic" language models was wrong. But boy, have they turned it around. They have the best text-to-speech, speech-to-text, and OCR models for Indic languages, and that's actually really valuable. The pricing is very reasonable. And the website is not only beautifully designed but dirt easy to use. They're filling a much-needed gap in the ecosystem and doing things big labs will probably never focus on to the fullest extent (at least in the short term). I don't know anything about the business, but there's a lot to appreciate about what they've built technologically and I can't remember the last time I felt that way about software products coming out of India. Well done.

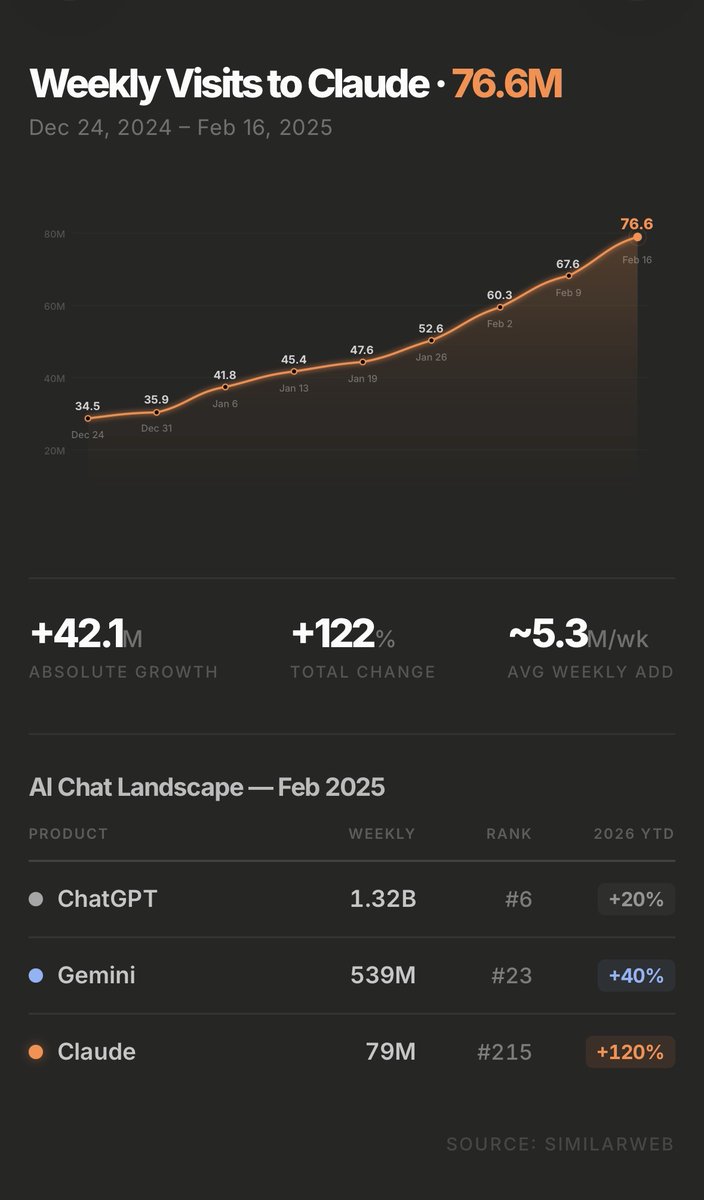

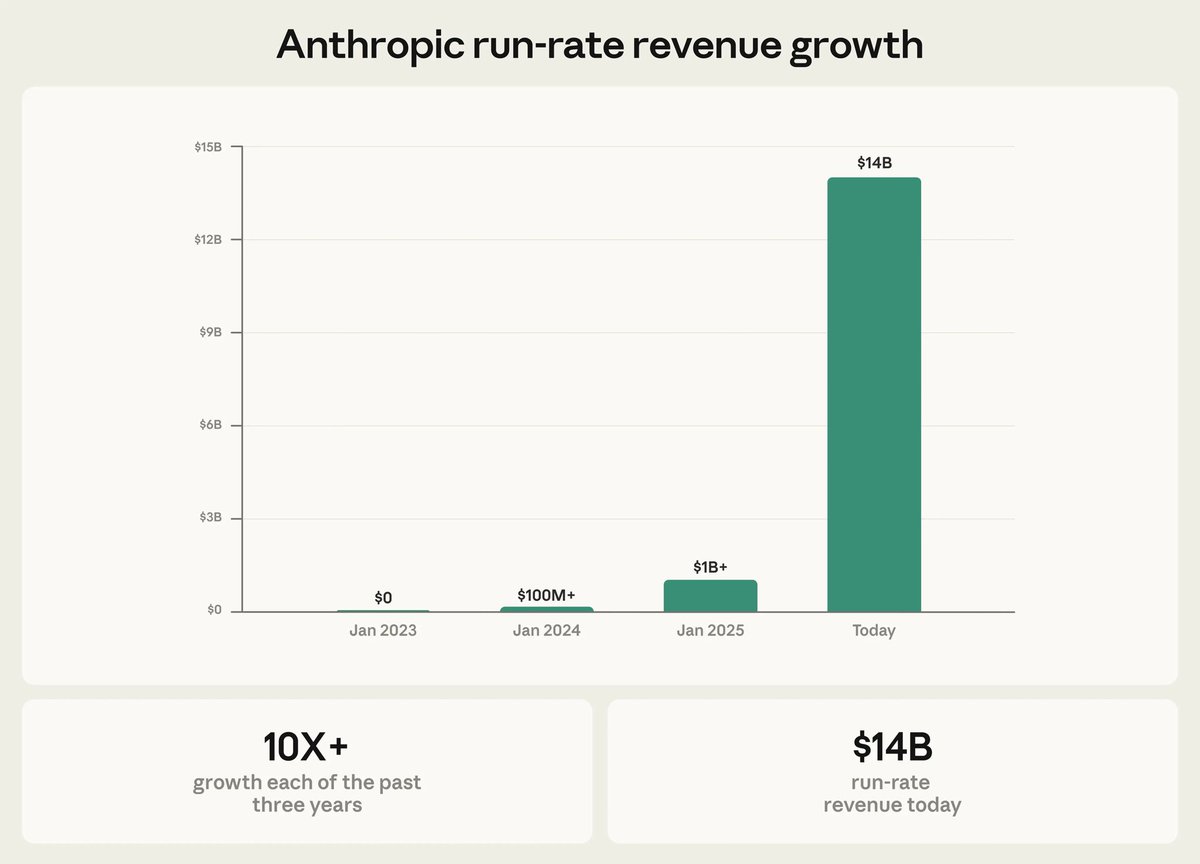

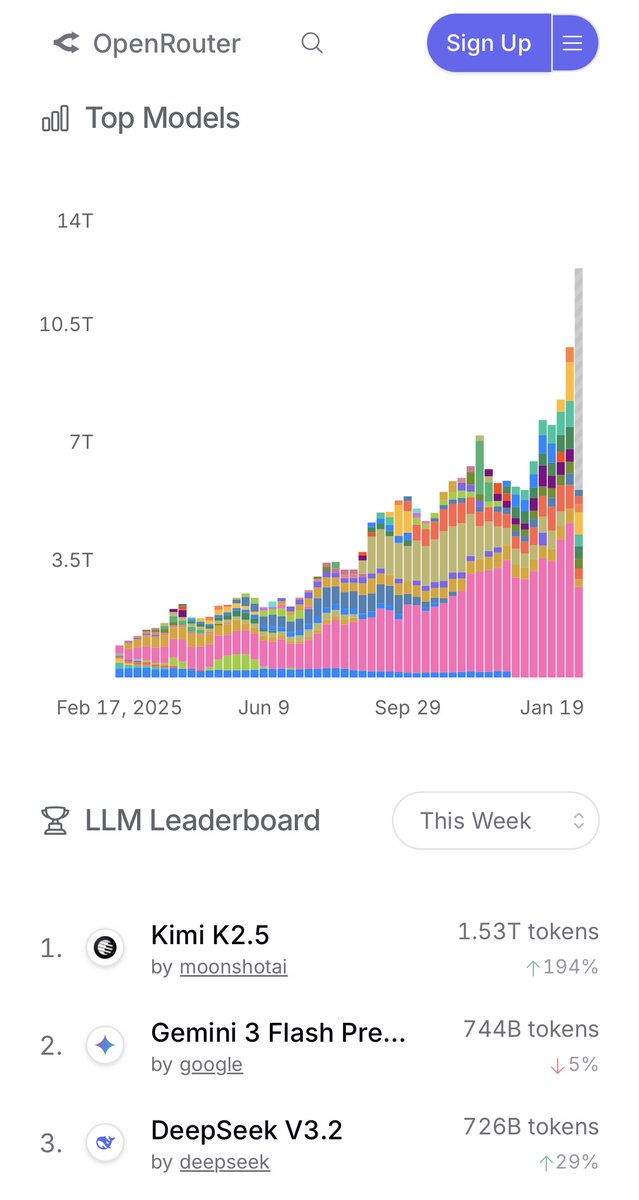

2026 has been a generational year for us at Menlo already.

- Anthropic is the fastest growing company of all time, adding $4.5B run rate in 42 days after the $380B round. We put ~$1B into it starting from the Series C.
- Suno reaches 100M users and $300M ARR.
- Lovable is the 5th most adopted and 2nd fastest growing AI vendor, going 0 to $200M in a yr.
- OpenRouter grew 2.5x in 1.5 months. On track to 1 quadrillion token annual run rate.
- Higgsfield hits $200M run rate with creative tools and a $1B+ valuation.
- Wispr Flow continues to grow 40% MoM with a 70% 1-year retention and wins some massive enterprise contracts.
- Clerk becomes the #4 fastest growing vendor, in the league of Google, Atlassian and Replit.
- Inception launches the first and best reasoning diffusion model that is the fastest for its intelligence at 1000 tokens/s.
- Goodfire, Anthropic's first direct investment, hits $1B+ val and discovers novel biomarkers for Alzheimer's.

Most VCs don't believe in this model of being picky, low-volume investors. We do very few investments (up to 2/partner/yr) and we go early. 5 of these were partnerships since the Seed. It's been working for us so far (even though we've missed a lot too!)

It's a privilege to work with founders who run through walls and take on so much risk to bring new things into the world. And we're very lucky to play a small part in that! Still a lot of work to do.

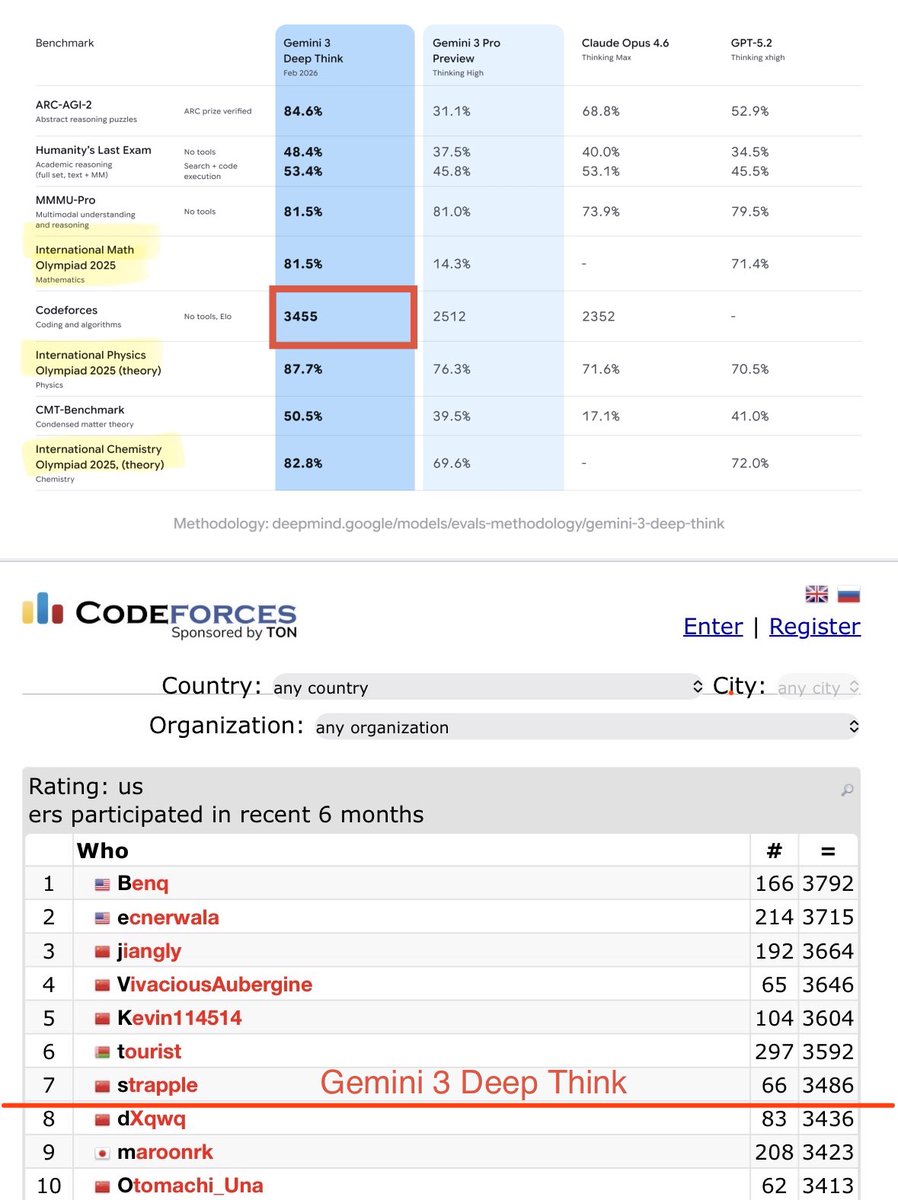

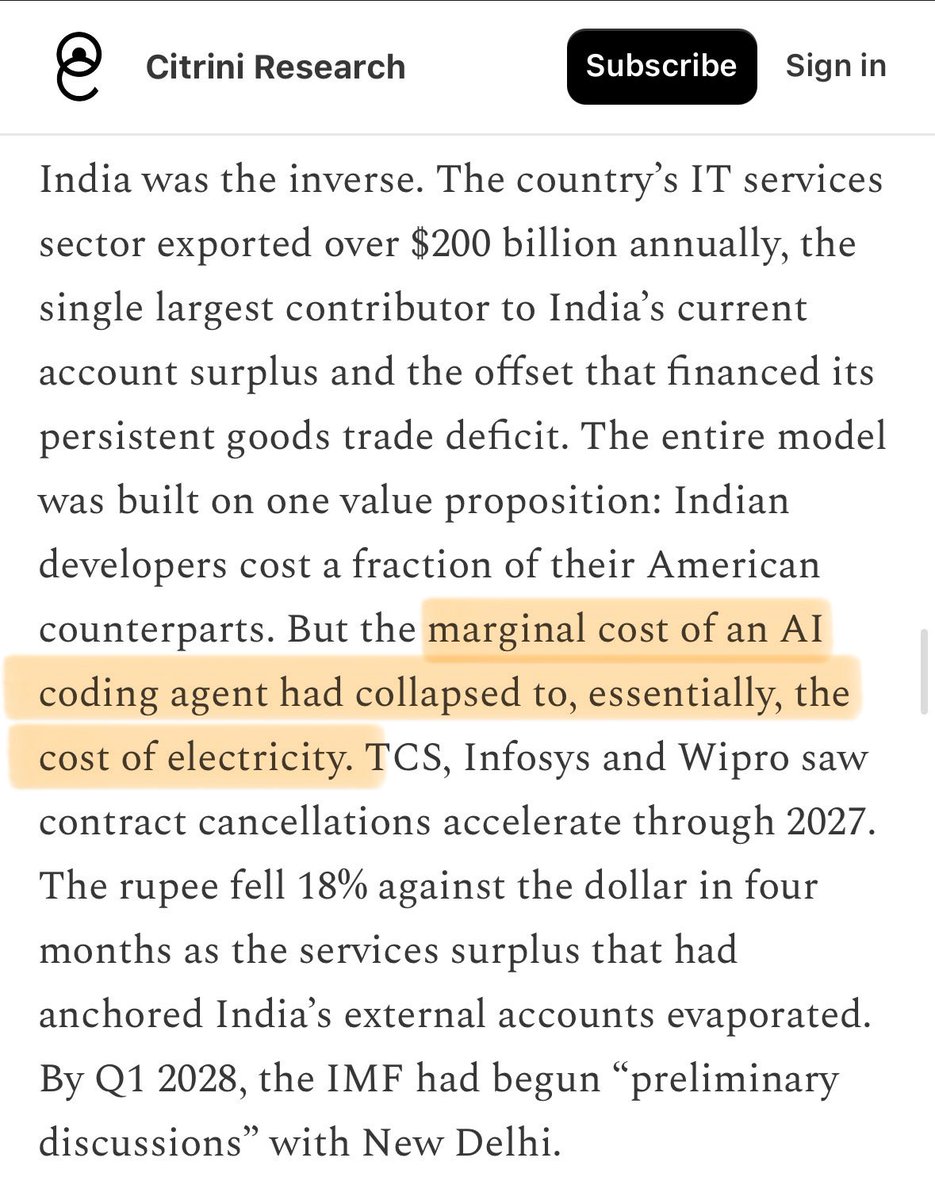

$50B of Indian IT services market value was eroded in the last 30 days. The Citrini article predicts it will collapse even more.

- Nifty IT index: -15%
- Wipro: -25%
- Infosys: -25%
- TCS: -17%
- Cognizant: -24%
- HCL: -17%
- Accenture: -25%
- Capgemini: -30%
- LTI Mindtree: -25%
- TechMahindra: -18%
- Mphasis: -20%

Palantir claims it can compress complex SAP ERP migrations (ECC to S4) from years to 2 weeks. GCCs (companies owning their own offshore IT departments in India) with Claude Cowork are far more economical than multi-year IT services contracts. I do think the 18% rupee collapse is exaggerated though.

The IT services business model absolutely breaks at the current capability of AI tooling, and it's ~10% of Indian GDP.

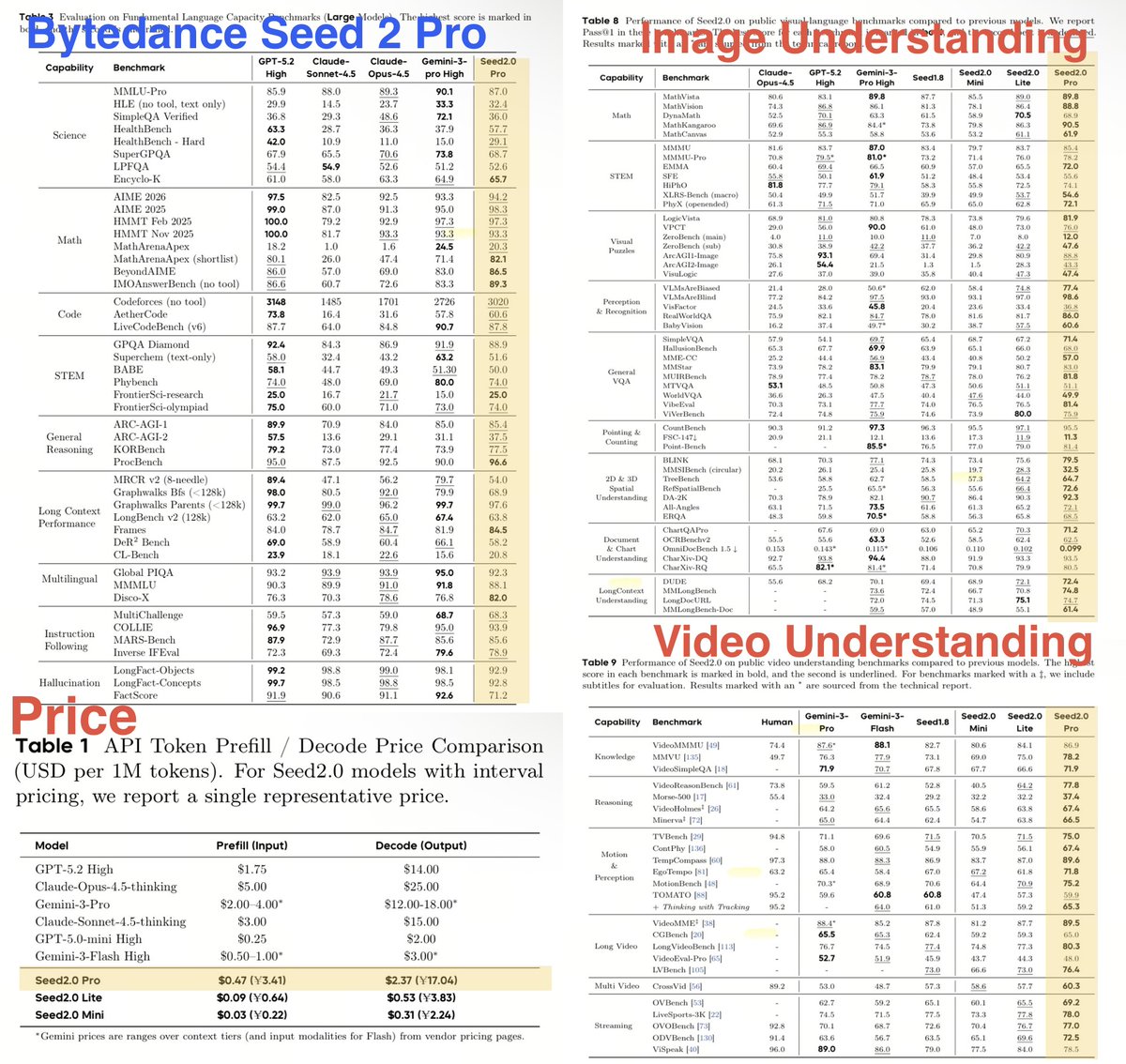

🚨This is actually the most mind-blowing Chinese LLM drop. Bytedance has done something special here. Seed 2 Pro crushes every single image and video understanding benchmark, a clear #1, while being near frontier on general intelligence. All while being priced less than Gemini Flash: $0.47/M input, $2.37/M output. Chinese models are usually cheaper, benchmaxxed fast followers but rarely cutting edge. This is different. With the groundbreaking Seedance 2 for video, NanoBanana-grade Seedream 5 and now Seed 2 Pro, it's clear that Bytedance has broken new ground in multimodal in a way that US labs (OpenAI / Anthropic / DeepMind) have not!
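To make the quoted per-million-token prices concrete, here is a minimal sketch of the arithmetic in Python. Only the two prices come from the post; the function name and the workload (10,000 requests of 2,000 input and 500 output tokens each) are made-up assumptions for illustration.

```python
# Prices quoted in the post, in USD per million tokens.
INPUT_USD_PER_M = 0.47
OUTPUT_USD_PER_M = 2.37


def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Cost of one request at the quoted per-million-token rates."""
    return (
        input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M
    ) / 1_000_000


# Hypothetical workload (an assumption, not from the post):
# 10,000 requests, each with 2,000 input tokens and 500 output tokens.
per_request = request_cost_usd(2_000, 500)
total = 10_000 * per_request
print(f"per request: ${per_request:.6f}, total: ${total:.2f}")
```

At these rates output tokens cost about 5x input tokens, so for output-heavy workloads the bill is driven mostly by the $2.37/M figure.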

Let's zoom out and think of everything AI has unlocked in the last 10 years.

- We can now generate and edit Hollywood-grade video [Seedance 2], speech [ElevenLabs], music [Suno] and image [Nanobanana, Midjourney]
- We have access to infinite senior software engineering code [5.3 Codex + 4.6 Opus] that is used to create these models too
- We can simulate entirely new interactive worlds [Genie] and use those worlds to teach robots
- We can do entire work tasks in English [Cowork]
- We can solve competition level math and coding problems [many] and even unsolved math problems [AlphaEvolve]
- We can write entire articles that persuade even elite industry veterans [ChatGPT]
- We can ask deep questions or chat with a therapist [ChatGPT]
- We solved the most complex games known to man: Poker, Go, Chess
- We solved protein prediction [AlphaFold]

If after this, someone says "but 95% of AI pilots fail", it's because they're using a bad product, an old product, or they're just haters.

The pace of AI is far outpacing the pace at which our institutions are able to keep up, and society 10 years from now is going to look extremely different from today.

I used AI to bring old scanned books in Hindi (and any other language) that were lost to history back to life. I brought back the prescient 1924 Hindi novel "Baeesween Sadi" (22nd Century), which predicts what life in the future might look like, 100+ yrs later. Indian author Rahul Sankrityayan had predicted things like video calls long before they were invented. How do you do it? Claude Code w/Opus 4.6 as the orchestrator, OCR by Sarvam [more on this later], GPT-4.1 as the translator, Gemini 3 Pro for illustrations. Ever since I was a kid, I've wanted to bring obscure literature from the past back and make it readable for everybody, and I was gobsmacked that you can effectively achieve this with a few prompts *perfectly*. If there's anything in particular you want brought back to life, let me know and I'll foot the bill to do it! Here is a link to the full PDF:
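The shape of that pipeline (scan, then OCR, then translation, with something orchestrating the stages) can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the setup used in the post: `restore_book`, `Page`, `fake_ocr` and `fake_translate` are hypothetical names, the stages are passed in as plain callables, no real OCR or translation service is called, and the illustration step is left out.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Page:
    number: int
    source_text: str   # OCR output in the original language
    english_text: str  # translated text


def restore_book(
    scans: List[bytes],
    ocr: Callable[[bytes], str],
    translate: Callable[[str], str],
) -> List[Page]:
    """Run each scanned page through OCR, then translation, in page order."""
    pages = []
    for number, scan in enumerate(scans, start=1):
        source_text = ocr(scan)
        pages.append(Page(number, source_text, translate(source_text)))
    return pages


# Stand-in stages so the sketch runs offline. In a real run these would
# wrap whichever OCR and translation services you choose.
def fake_ocr(scan: bytes) -> str:
    return scan.decode("utf-8")


def fake_translate(text: str) -> str:
    return f"[en] {text}"


book = restore_book([b"pehla panna", b"doosra panna"], fake_ocr, fake_translate)
for page in book:
    print(page.number, page.english_text)
```

Passing the stages in as callables keeps the orchestration independent of any one vendor, so a different OCR or translation model can be swapped in without touching the loop.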

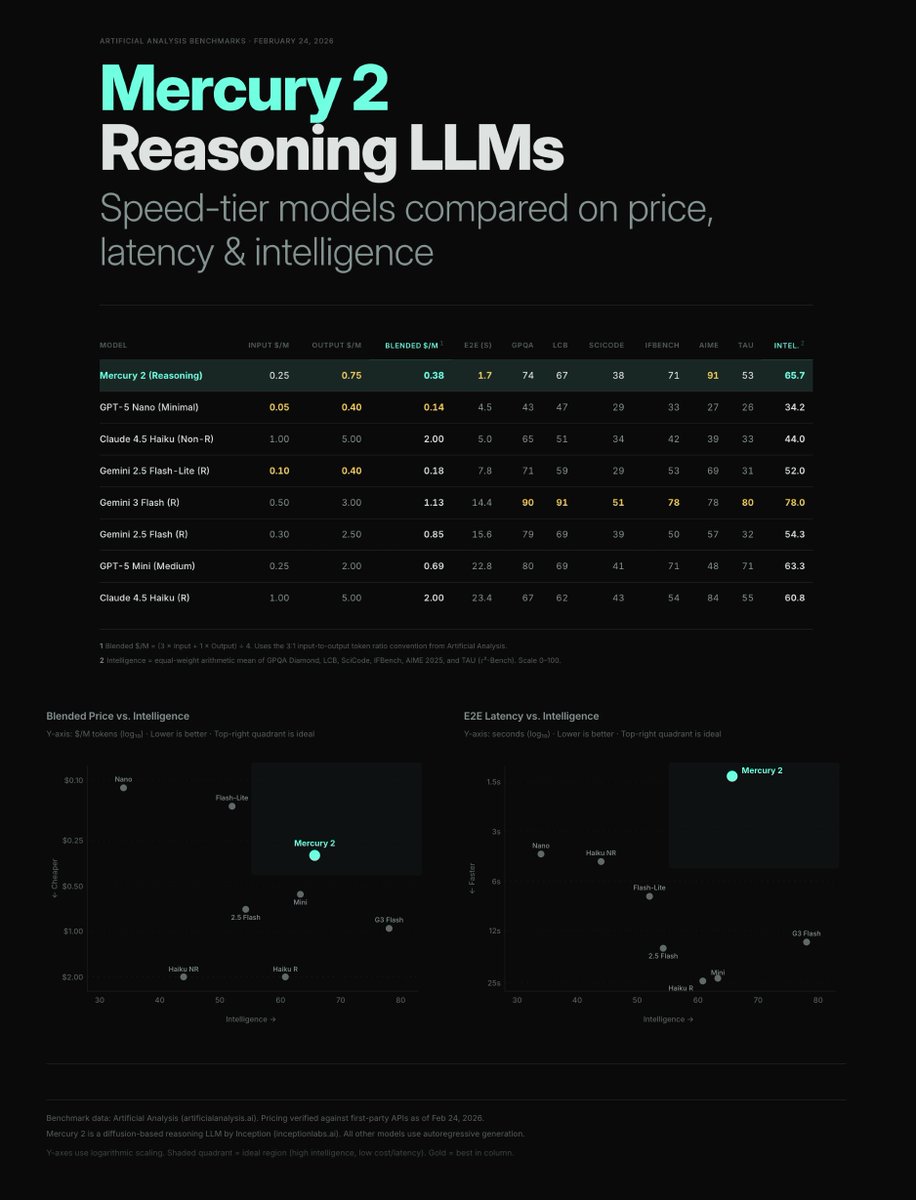

Inception Labs is the AI co everyone's sleeping on. 3 profs from Stanford, Cornell and UCLA just dropped Mercury 2, the first reasoning language (and code) diffusion model ever. It is 10x faster and the cheapest model for its quality. They're not quite at frontier like the Claude 4.6 / 5.3s of the world but they are reshaping the Pareto frontier for price/quality and latency/quality. If they get to frontier, they'd upend the current economics of large language models.

📝 The Permanent Underclass [not written with AI]

Last week, I spoke to dozens of people still in or just out of college. Good ones too (Harvard, MIT and the like). They were anxious. They were promised that they just needed to study to get into a good college, graduate with a high GPA in a good major, get a high paying job in a big city, and eventually buy a house and start a family. But that promise broke. They all believe there is going to be a "permanent underclass" and they want to escape it.

There is some truth to this. The reality is that there are fewer jobs for qualified graduates than ever before. And the ones that exist don't seem to set you up for a path to financial freedom. BigTech hiring has plateaued for 4 years now. In the last month, I've had several startups tell me unironically what you hear from X AI hype machines: "I really don't need to hire junior engineers right now, I'd rather spend the money on Claude Code." Other industries don't paint a rosier picture. There's a sense that most kinds of knowledge work at a desk will eventually be automated away.

Many who have benefitted from the AI wave and technology in the last 10 years have made north of $10M while most students struggle to even break into a job that pays $100k a year. Every single student wants to "start a startup", not because they have an all-consuming itch to create, but because it feels like the only path to financial freedom.

I don't know the systemic solution to all these problems. But if I were a student today, here's what I would do.

- Time is the only limited resource left. You can't afford to waste it. Networking, events, and "talking about doing things" have limited value. Too many people spend cycles trying to appear and sound smart. Too much time on social media. Too much Netflix. Too many people dream about "building a company" without ever going deep enough into anything to have a real unique insight. You must protect your time like someone's trying to steal it. Treat time like actual money in your wallet. Every day, you need at least 4 hours of protected, alone, do-not-disturb time to do your personal work. This is your "work" time.
- Exploit. Use 70% of your "work" time to focus on one deep area, consistently. This must be for 3 to 6 months at a stretch, minimum. Every single student I met was way too distracted. One day, they cared about X, the next about Y. They let their feeds, their friends and the news cycle decide what they cared about that day. You need to pick a lane and go deep. I'm not prescriptive about what this means. It doesn't have to mean "building something". It could be deep research into a topic. It could be answering a question like "Can I trace the history of every single important funded company for the last 25 years and figure out why they worked or didn't." Hell, it could be starting a podcast. But it has to be one thing. You can learn most of what you need from LLMs and actually reading the underlying sources.
- Explore. Use 20% of your "work" time to zoom out and learn things outside your focus lane. One of these things should be playing with new AI tools. Don't hesitate to spend money here: this is the best investment you'll make. Technology is evolving far faster than the speed of coursework. And the more time you get with tools, the more prepared you will be for the future. Just block off time to experiment with all the features. This should feel like fun. Just play. With no goal. You can also use it to read books outside your area. The idea is to go wide.
- Externalize. Use 10% of your "work" time to write. Use writing not to market your learnings and work to others, but simply to digest them. To make sense of them. To begin formulating a journal you can call back to later. Do it diligently. If you're embarrassed, that's fine. Don't publish it under your name, but publish it. Publishing is very different from writing an internal journal because giving it permanence means it has to pass your own bar for scrutiny. It's easy to write something lazy that you didn't understand. It's very hard to publish it. The prospect of shame is the forcing function to do a good job. This will over time serve as a way to remember your progress and what you actually did. It'll become the resume that actually matters.

We live in a world where agency matters more than intelligence, but our systems, protocols and institutions have not evolved to keep up. I'd bet on this over networking sessions, 100s of unread job applications and wafer-thin pipedream startup ideas.

http://x.com/i/article/20240061987822919…

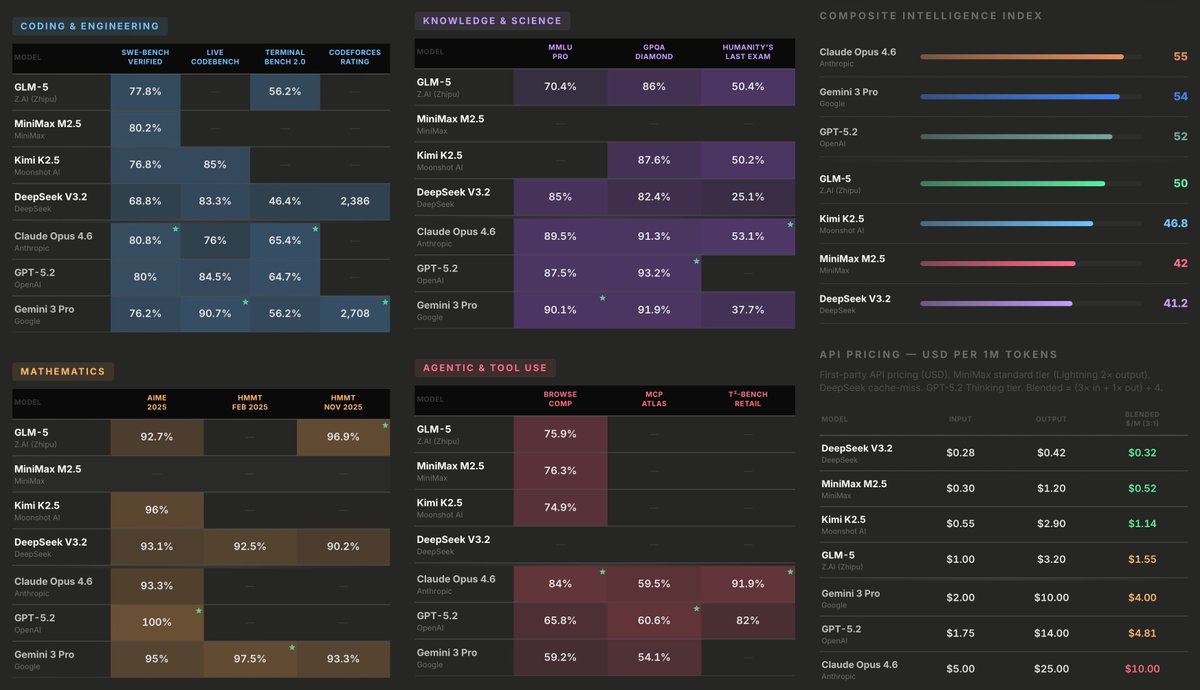

🚨Chinese AI labs just dropped 3 frontier open-source models in the last week, ~3-20x cheaper than US models. I made this benchmark + price cheatsheet with everything you need to know.

- Minimax M2.5: Ultracheap, great for coding
- Kimi K2.5: All-rounder, best for OpenClaw
- Zhipu GLM-5: Premium all-rounder