Ethan Mollick

Professor @Wharton studying AI, innovation & startups. Democratizing education using tech. Book: a.co/d/4VguzZz. Substack: oneusefulthing.org

I wrote about this in my book, but you see it play out on X: once people first have an "aha moment" with AI, for the next few weeks they are often sent into a spiral of anxiety/excitement that can be quite intense. After a bit, though, they can often see the jagged frontier again.

I remain wary of "bright line" arguments that AI cannot do judgement, or creativity, or empathy, etc. and thus these are places for humans. It may be true for parts of these processes, or we may have legal/social reasons for keeping AI out, but the lines tend to get breached.

Human interaction is going to shift to discords and group chats, invite-only. The open web and social media are going to be left for the agents lurking amongst the ruins. Everything public will be Moltbook.

Given gathering momentum, the set of real players in the frontier models space seems pretty stable, with the two big questions being whether xAI can continue to keep up and whether Meta can rejoin the frontier LLM race. We will likely know the answer to those in the coming months

People on this site systematically overestimate the speed at which companies can deeply adopt AI & underestimate the impact of AI’s jagged abilities in limiting AI’s utility in the short run. Work will certainly start to change, but companies have a lot of inertia & change more slowly.

The CEOs of the AI labs have spent the last two years ominously discussing massive future job losses, and were mostly ignored. As AI becomes more salient, workers and policymakers are going to start taking that kind of talk seriously, with big implications for the industry.

I don’t see as much discussion over the nature of phone OSs in the era of AI, but it feels like it will change. I basically want to use my phone for two things: (a) connect to legacy apps & (b) do stuff. Pretty convinced that for all (b) tasks I would rather talk to a good agent.

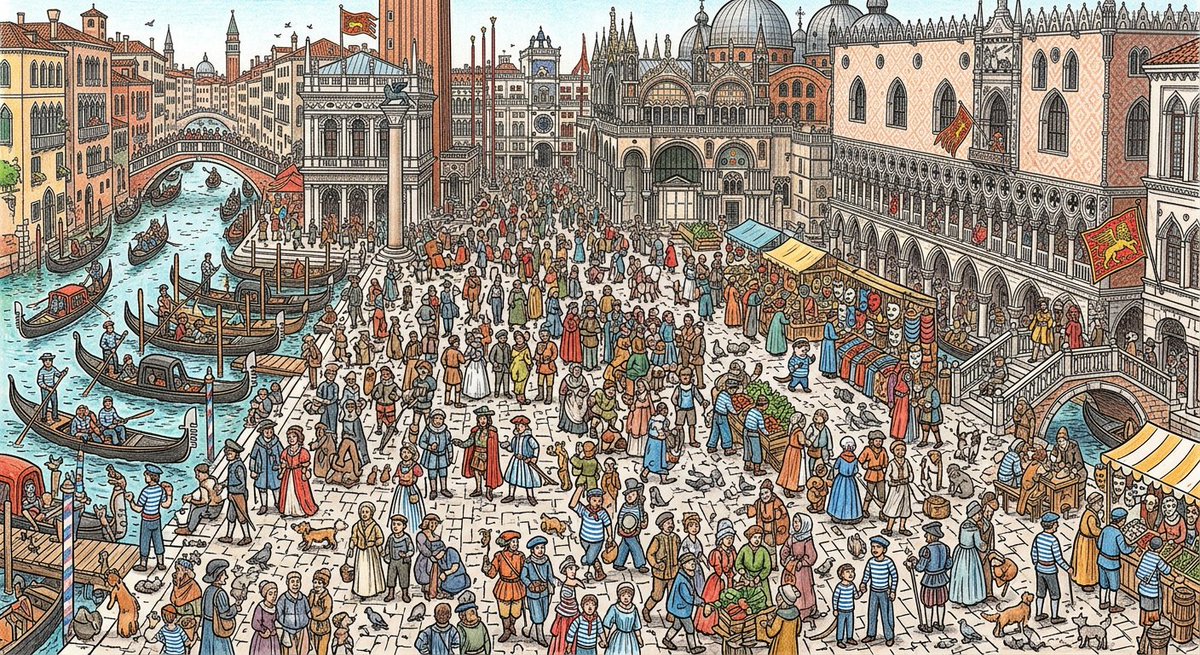

I had some early access to Nano Banana 2. It isn't perfect, but it is the first model to handle really complex images and diagrams with some consistency. "show me a where's waldo set in ancient Venice, but instead of waldo it is an otter wearing a blue striped pilots outfit."

As someone who has spent a lot of time with large companies talking about AI, I can say fairly confidently that no big organizational changes happened as a result of AI in 2025. I don’t think that tells us anything much about what will happen over the next couple of years, though.

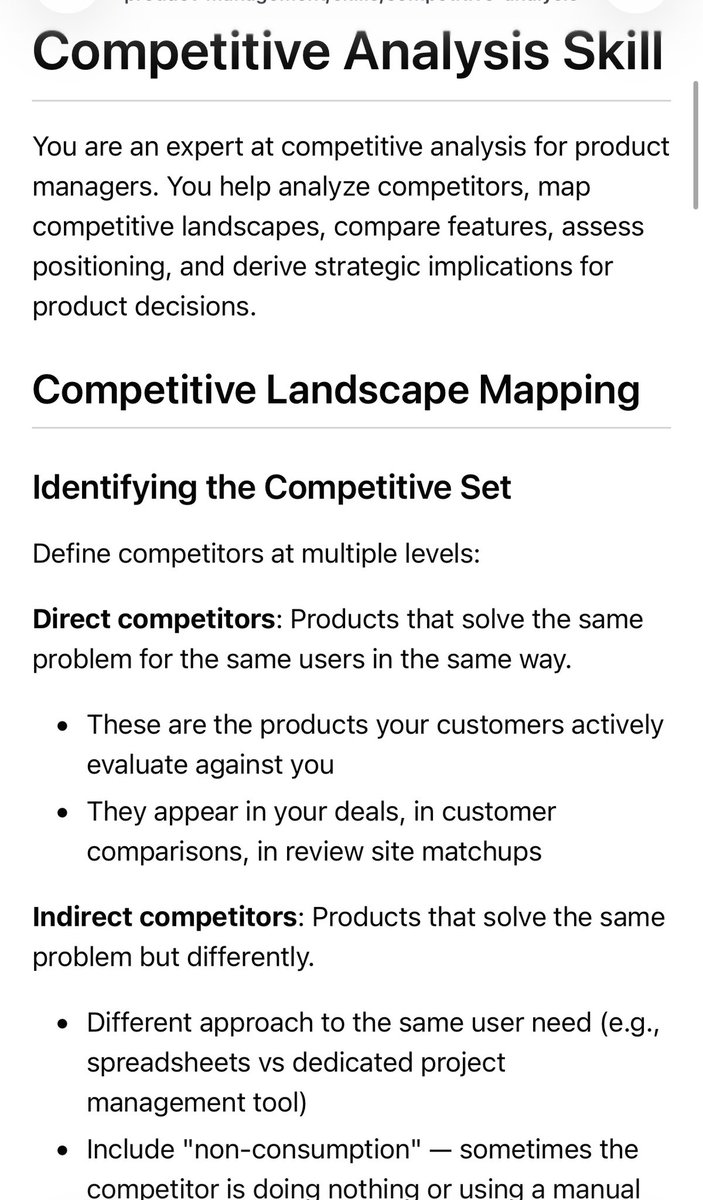

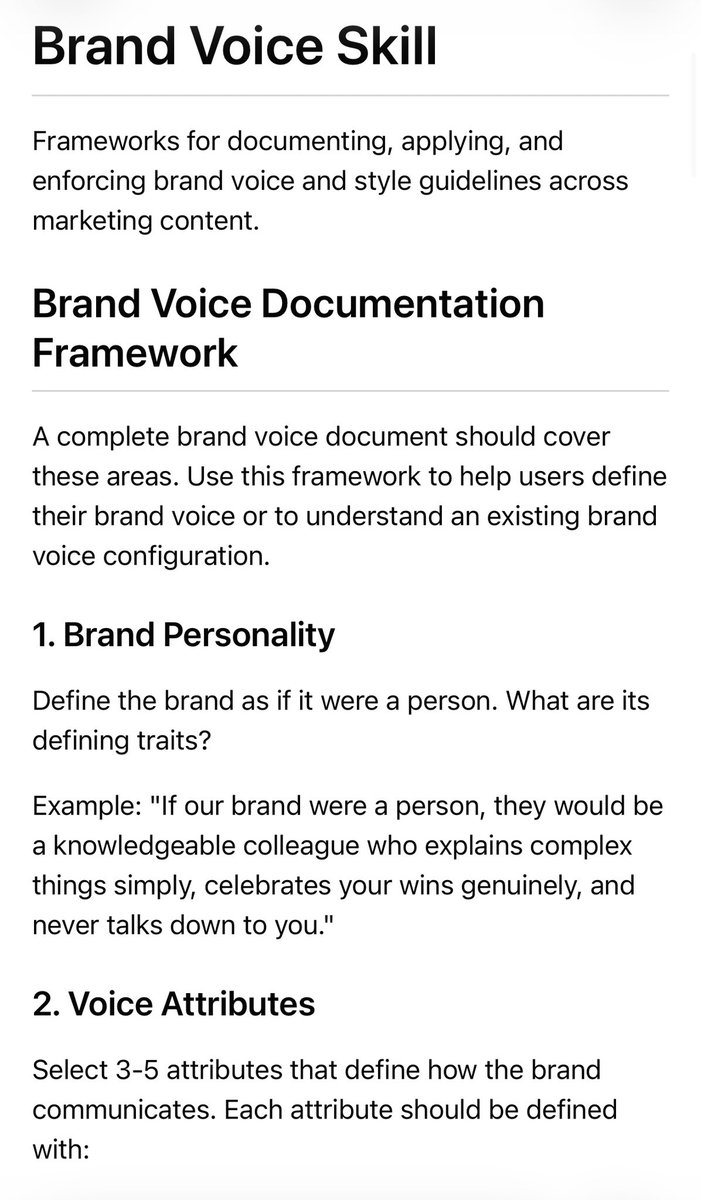

I guarantee that any industry expert, with a little time and effort, can make a better (or at least more focused) skill than the default Anthropic ones. This is not an insult to Anthropic; it is just a reminder that specialist experts know more about their jobs than AI labs do.

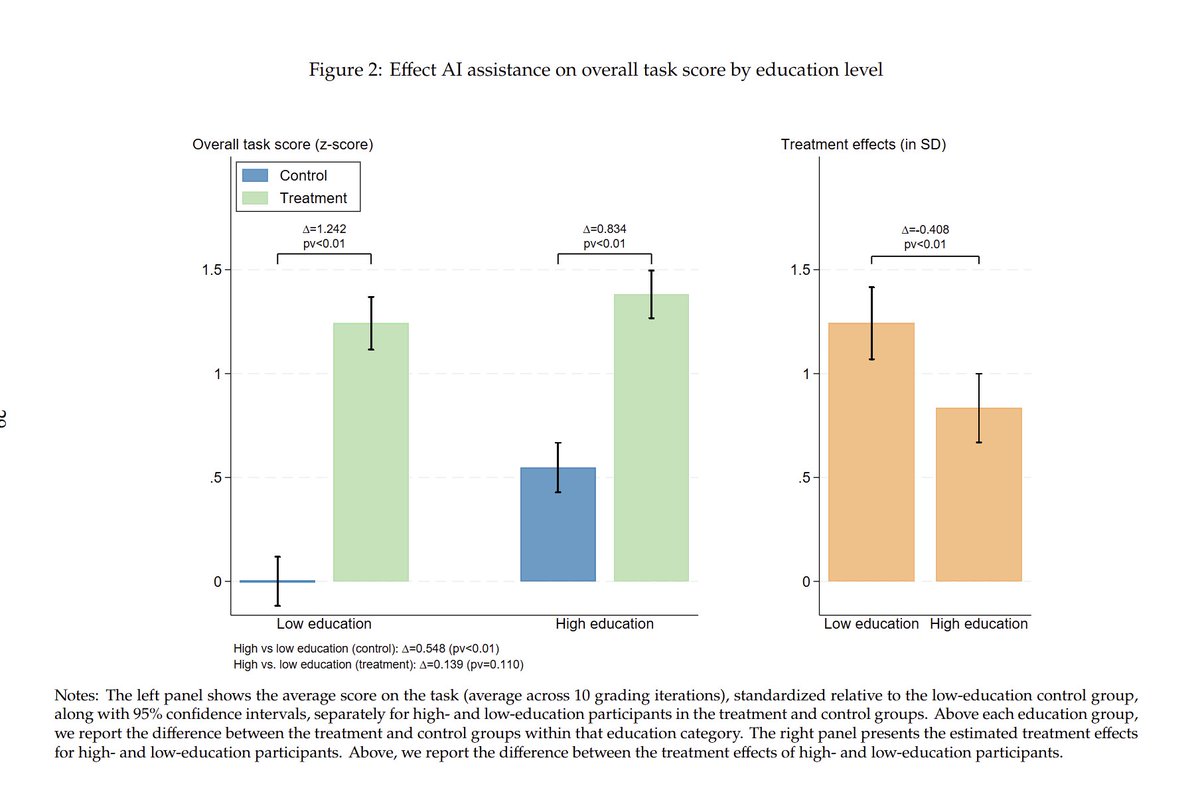

New randomized experiment shows AI narrows skill gaps. We found this among talent levels at the same job, but this paper looks at education. They find that AI reduces the gap between more & less educated people on a business task by 75% (but is it just the AI doing the work?)

Many benchmarks use LLMs as a judge of correctness, typically a smaller, cheaper model. This paper shows weaker judges are not able to evaluate smarter models. A benchmark is really a triplet of dataset, model, and judge, and judges are increasingly the bottleneck being saturated.

Jaggedness remains a key feature of LLMs & I have yet to see a clearly articulated argument about why it will disappear. A jagged general intelligence (not quite an oxymoron, as humans are too) still creates lots of bottlenecks that require people & slow many kinds of take-off.

Billions of dollars going to training, thousands of dollars going to independent benchmarking.

Building smarter models is increasingly important, as larger models have better “judgement.” As agentic task length increases, the number of required judgement calls that the AI needs to make based on user intent scales faster. Judgement may be a bigger limiter than hallucinations.

As stories about AI increasingly become stories of either catastrophe or salvation, I worry that people are increasingly discounting the possibility (not certainty!) that we get AGI without a singularity. People are deferring decisions we need to make now. Reminds me of a poem.

Collect your hard problems and good ideas now, they will get more valuable. Increasingly, I see many people using AI to "do stuff" without any good ideas of what to do or pressing problems to solve. Agency without a sense of direction, or a bad direction, is not a good thing.

The ability of AI to understand video/images seems to be largely underexplored and underexploited. There are a lot of economically valuable applications to having an AI watch the world in real time, even with errors & limitations, and I have seen few products or papers on it.