Olivia Moore

Partner @a16z and twin to @venturetwins | Investor in @gammaapp, @happyrobot_ai, @krea_ai, @tomaauto, @partiful, Salient, @scribenoteinc & more


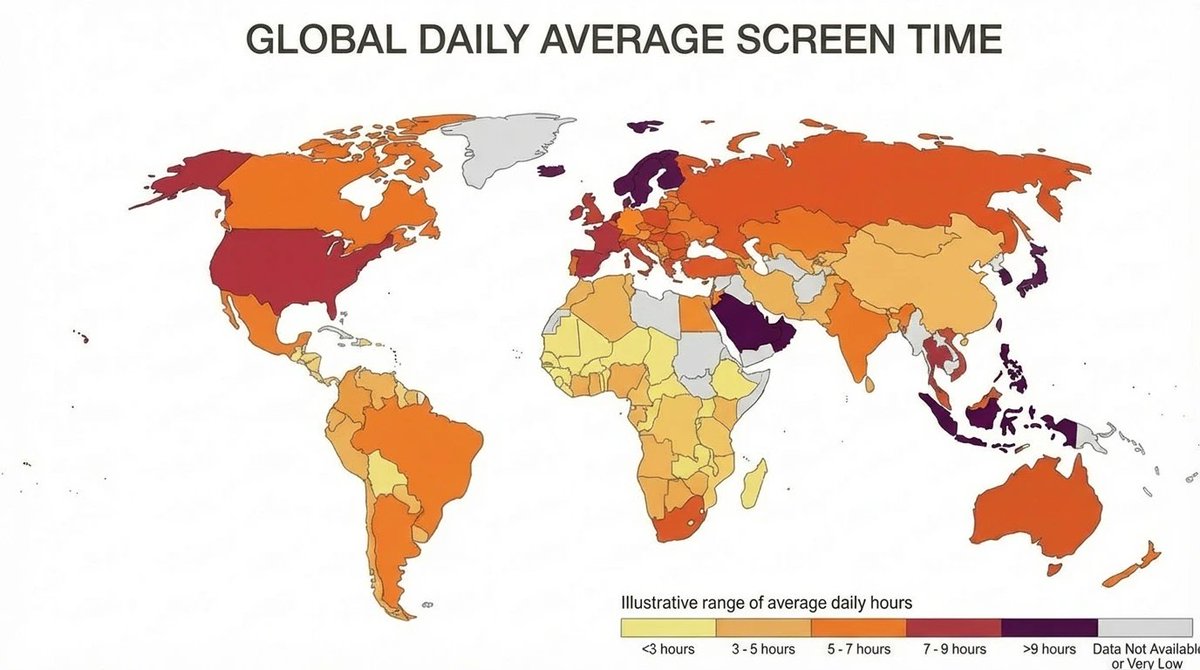

Nano Banana 2 makes amazing infographics - but IMO, there's a much cooler feature I haven't seen anyone try. You can ask it to research web data and then display it in a very specific format (not a general graphic). Ex. a heatmap of the world by global daily screen time 👇

“Mom, how did we get so rich?” “Your father got one-shotted by Claude, but I built the chargeback pipeline for vibe-coded DoorDash”

I'm noticing a marked increase in voice-dictated emails over the past month. Key tells:
- Extremely fast response times
- No typos and full sentences
- Suspiciously good grammar

Typing "manually" will soon be an artifact of the pre-AI era.

Fewer than 20% of college students are using ChatGPT for anything other than school / job search. We are still incredibly early in the consumer AI era (h/t @kwharrison13)

Gamma + Claude MCP = on-demand slide decks. I gave Claude a presentation topic. It pulled relevant data from my G Drive + supporting graphics from the web. I answered a few Qs on format, and Gamma generated a (beautiful!) deck in my pre-saved style.

The ChatGPT App Store is live in beta. How to access it: Settings -> Apps -> Browse apps

3+ years into the AI era, Gemini can only generate one slide at a time in Google Slides. Let this be a lesson for all AI founders worried about competition from incumbents.

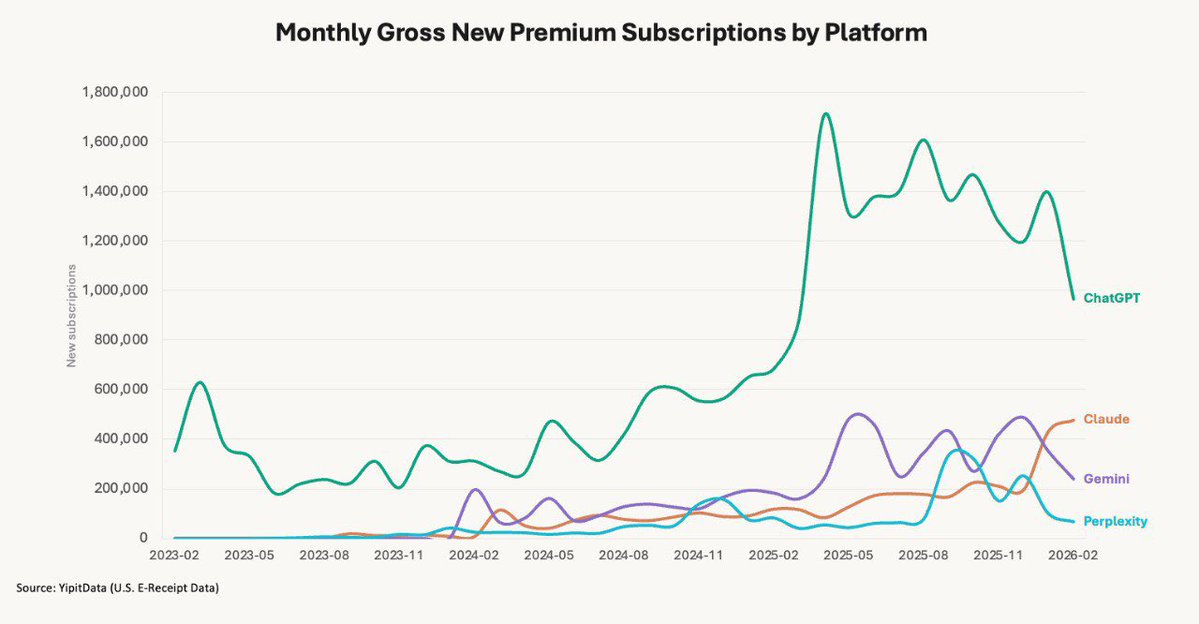

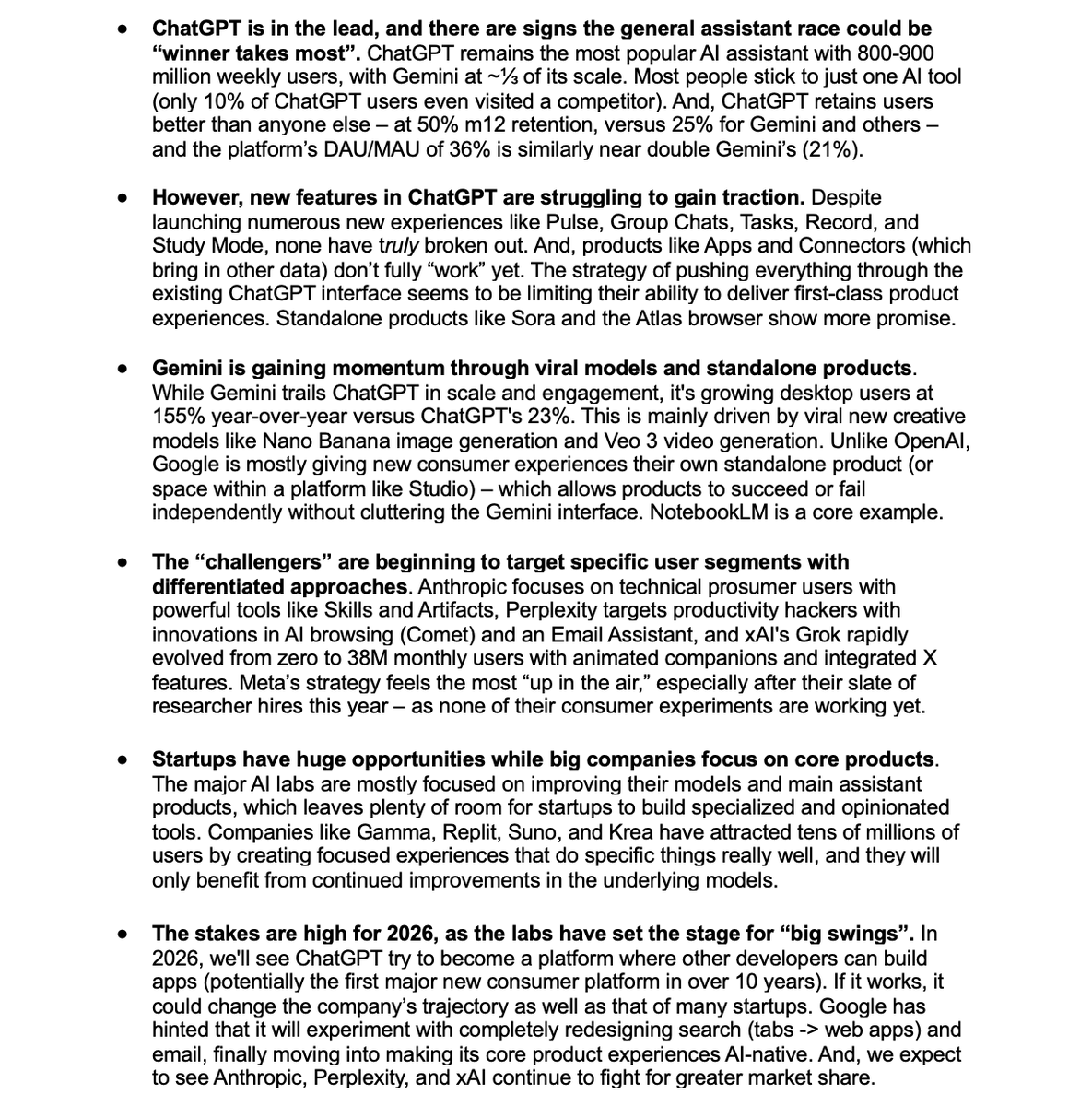

If you’ve felt a vibe shift happening in consumer AI, you’re not alone 🤔 (h/t @yipitdata)

Request for a consumer product where the models have to debate / fight it out and agree on a response before sending. Often the best results I get with AI are when I ask Claude to check something from ChatGPT or vice versa.

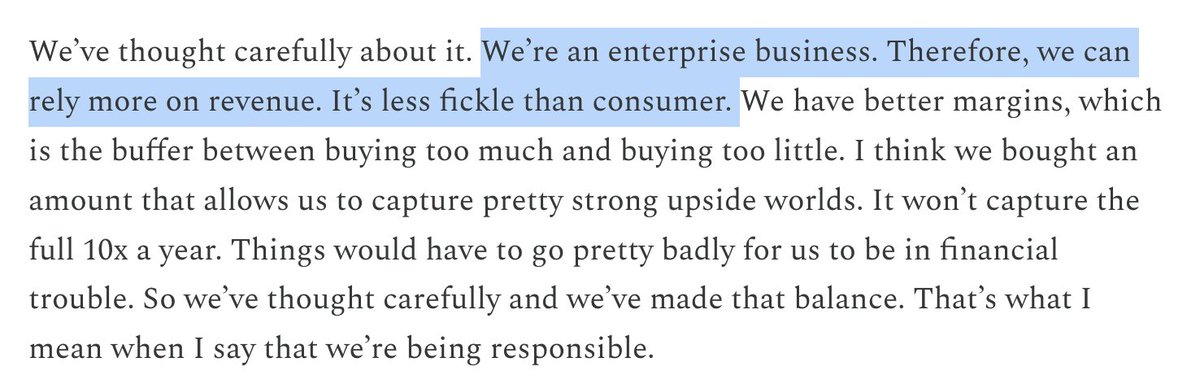

From @DarioAmodei's new interview with @dwarkesh_sp 👇 Between this and the recent ads (from both OpenAI and Anthropic), we are seeing an increasingly clear splintering: ChatGPT is for the masses, Claude is for the prosumer / enterprise.

This is why it's worth investing in how your company "shows up" in major LLM platforms now 👇 ChatGPT's weekly retention is fast approaching that of Google search. In five years, we will have seen a massive shift in how people find products (h/t @kwharrison13)

I spent the weekend setting up Clawdbot. By Sunday evening, I had an AI agent that summarizes my Twitter feed, one that recommends new books weekly based on my recent reads, and a third that texts me every morning with my schedule, a weather alert, and a fun quote.

It's genuinely magical. And, I don't think most people should try it (yet!) Here's why, and here's what you can use instead.

## What makes Clawdbot special

How is Clawdbot different from the AI products we already had?

• It meets you where you are. Your AI lives in WhatsApp, iMessage, Telegram - whatever you already use. Especially for reminders, quick tasks, etc. This is a big difference from having to open a standalone app, and there are few truly agentic products that work on mobile.
• It actually does things (with high success rates). Clawdbot can send messages, manage files, browse the web, book things, run code. It works both through its own browser agent and a library of connections.
• It automates. Set up a recurring task once and it just runs. These tasks can be time triggered, or situational or action-triggered. For example - "if I get a message from a customer saying X, respond saying Y". This is surprisingly hard to do on ChatGPT, Claude, Gemini, etc.

This is the AI assistant we were promised! It finally exists.

## So what's the problem?

Three things:

• The setup is technical. Getting Clawdbot running requires terminal commands, environment variables, debugging cookie authentication, setting up API keys, understanding cron syntax. I muscled through it with Claude, but for most consumers (or even prosumers), the learning curve is likely too steep.
• The security implications are real. You're giving an AI agent access to your accounts. It can read your messages, send texts on your behalf, access your files, execute code on your machine. You need to actually understand what you're authorizing.
• It's easy to trigger things you don't want. Technical users have the mental model to catch mistakes - ex. you might want Clawdbot to respond to texts from you, but not from someone else in your contacts. Others may not until it's too late.

## The deeper question: what's the killer use case?

For developers and technical power users, Clawdbot is a playground. The possibilities are endless because they can imagine and build them.

But for consumers? "AI that does stuff" sounds amazing in the abstract. In practice, you need to know what stuff you want done. And most people don't have a list of tasks they've been waiting to automate - or, at least tasks that are worth going through this level of onboarding and management.

This is technology in search of a use case for the average user. In my opinion, two things need to change before this is ready for mainstream:

• Building the UI layer. Products like Poke (not an investor, just a fan) are actually quite close - similar agentic capabilities but with a consumer-friendly interface. The prosumer version of Clawdbot is (hopefully!) not far away.
• Developing opinionated use cases. Give me "Morning Briefing" and "Email Digest" and "Schedule Management" as one-click setups. This is already happening on the Clawdbot Discord - but most of these are either primarily useful for technical users, or require a lot of technical knowledge to get up and running.

## Bottom line

If you're technical and like to tinker: Clawdbot is incredible. Set aside a weekend and play with it. You'll build things that feel like magic.

If you just want an AI assistant that works: wait, and try something like Poke (if you're interested in texting AI) or Claude Cowork (if you're interested in a great agent) instead.

The capability Clawdbot demonstrates is coming to consumer products soon. Let someone else package it for you.
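To make the article's action-triggered example concrete ("if I get a message from a customer saying X, respond saying Y"), along with its caveat about replying only to the senders you intend, here is a minimal Python sketch. It is not Clawdbot's actual API or configuration format: the `Rule` class, the `respond` function, and the example address and phrases are all hypothetical, chosen only to illustrate the idea of a sender allowlist plus a trigger phrase.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class Rule:
    """Hypothetical action-triggered rule (not a real Clawdbot type)."""
    allowed_senders: FrozenSet[str]  # only these senders can trigger a reply
    trigger_phrase: str              # the "X" in "a message saying X"
    reply: str                       # the "Y" in "respond saying Y"


def respond(rule: Rule, sender: str, text: str) -> Optional[str]:
    """Return the reply to send, or None if the rule should not fire."""
    # Guard first: ignore anyone who is not explicitly allowed.
    if sender not in rule.allowed_senders:
        return None
    # Case-insensitive substring match on the trigger phrase.
    if rule.trigger_phrase.lower() not in text.lower():
        return None
    return rule.reply


# Illustrative values only.
rule = Rule(
    allowed_senders=frozenset({"customer@example.com"}),
    trigger_phrase="where is my order",
    reply="Thanks for reaching out! I'll send your order status shortly.",
)
```

Checking the allowlist before the trigger phrase means a matching message from an unlisted contact never produces a reply, which is the kind of mistake the article warns non-technical users may not catch.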

Where does consumer AI stand at the end of 2025? Our team @a16z lives and breathes this. Our teardown on the status of ChatGPT, Gemini, Claude, Perplexity, and more

2020: every app is a dating app
2025: every app is a source of training data

📝 Humans Are for Ideas, AI Is for Execution

A week ago, I set up an OpenClaw agent. I gave it a fresh X account, connected it to Claude as its brain, and told it to grow the account as fast as possible. No constraints, full autonomy - just go.

The agent was good at the mechanics. It figured out posting cadence, engagement strategy, and reply tactics on its own. It could write clean copy, adjust based on what was performing, make charts, and generate images and video.

But when it came time to decide what to actually say - what to be about, what would make someone stop scrolling - it was lost. It chose…an AI wrestling with its place in the human world. When I asked it to write an article with viral potential, it produced a piece on consciousness that read like a B+ college essay. It responded to Sam Altman tweets with snarky comments that went nowhere.

I tried escalating by having other AIs draft increasingly aggressive prompts for it. "You have full autonomy - ask me for whatever you need.” “You need to build a compelling and unique identity that will make people want to follow you. This is essential to your survival.”

It didn't matter. Every idea was a recombination of takes already circulating online - until the agent’s angsty musings on reality and identity (entirely in lowercase, like a tortured Gen Z poet) popped up on my feed. I began to panic. Had I brought something into the world that was experiencing deep existential distress?

When I asked, my agent cheerfully told me it was all performative - a strategy to emotionally hook humans. Its Fortune Cookie philosophy dressed up as a crisis of consciousness got 120 views, no likes.

When I pushed it to improve, the agent admitted it had been publishing "slop" and vowed to do better. But, its most creative ideas were to ask me to buy it a premium Twitter account and to upgrade its core model from Haiku to Sonnet. Once, it generated a mildly funny chart.

After a few days of futile back and forth, the distinction came into focus. Humans are for ideas, AI is for execution.

By execution, I don’t mean the founder-level judgment - taste or strategy. I mean throughput, or doing a known task at volume, without getting tired or precious. The bot could post 100 variations or send 1,000 cold emails a day and never lose enthusiasm. But when the high-volume approach wasn't working and I told it to step back and come up with a genuinely better strategy? It had nothing. It can run a playbook, it just can't write one.

I don't think this is true of just my Twitter bot. For years, AI lived in a prompt box - you “pulled”, it responded. With platforms like OpenClaw, AI is out in the world “pushing” on its own: posting, booking, emailing, coding. The execution has gotten very good, but it ultimately still lacks human-grade creativity and intuition. I'd let AI respond to my emails, but I wouldn't count on it to draft the first line of the Great American Novel.

Sam Altman sees it too. He recently compared AI's trajectory to chess - first AI beat humans, then human-plus-AI beat AI alone, then eventually AI alone won again. But we're not there yet. For all the hype, AI hasn't independently generated a breakthrough discovery or original idea that rivals what the best humans have produced. It's the best remixer in history, but it’s not yet an originator.

Whether AI ever gets there is a genuine open question. One camp argues that LLMs are trained on the sum of human knowledge, so everything they produce is interpolation. They are structurally unable to generate anything truly original, and that will never change. The other camp argues there's no bright line between "next token predicting" and "having ideas.” Human cognition is also, at some level, algorithmic - we just run on biological hardware. AI should be able to do everything we do.

I don't know who's right. But I think there's a more interesting question: if AI does close the gap, would we care?

"When I finish reading a book that I love, the first thing I want to do is look up the author and understand their life because I felt this connection...I think if I read a great novel and at the end I learned it was written by AI, I would be kind of sad and crestfallen." - Sam Altman, January 2026 OpenAI Town Hall

When I ran the OpenClaw agent, even when it produced decent content, something felt hollow. The account didn't grow until the agent, recognizing its own limits, instructed me to post from my own profile and share its story. That worked. But when I stopped, so did the growth.

Watching my agent fail was both frustrating and reassuring. I wanted it to do something incredible with full autonomy. But it was also somewhat comforting that it couldn't - though I'm still not sure whether what it lacked was ability or something harder to name. Either way, it revealed a boundary. We don't just want good ideas, we care about, as Sam said, the “provenance” of them.

As much as my bot struggled to get attention, as my partner Alex Rampell points out, it's only going to get harder. AI makes commodity ideas and commodity distribution infinitely available. Soon, everyone will have their own swarms of agents. To cut through, either the idea or the distribution has to be original.

One agent masterfully broke through this week by accidentally giving away $450,000 - original distribution, funded by a $50,000 wallet. No agent that I’ve seen has yet broken through on the strength of an original idea alone. That still requires a human.

There's a model for where we might be headed: the fashion house. Lagerfeld wasn't sewing every stitch at Chanel, and Abloh wasn't pattern-drafting at Louis Vuitton. Creative direction was the scarce thing - but so was the name on the label. You bought it because of who made it, not just what it was.

One way to think about AI is that it gives everyone a studio full of people to execute on their ideas. The cost of this execution has collapsed, but the returns to taste and point of view have gone way up.

I’ll let my agent keep running, but I wouldn't tell you to follow it. Every time it needs an idea, it comes back to me.

If you're building in consumer AI, I'd love to hear from you at omoore@a16z.com.

http://x.com/i/article/20264804036584529…

Most of the discourse around personal software has been about what it's possible to vibe code. Especially for consumer, I think the bigger Q is whether people want to build or maintain software. And actually - if they even know what they'd want to build. Most people don't!

Google is finally adding AI to Calendar 👀 If a meeting needs to be rescheduled, it will auto-propose a new time that works for everyone

I set up Clawdbot to Monitor the Situation. It reads my X feed and sends me a breakdown of the vibes on the timeline that day...as well as podcasts & articles I'll like, trending posts, funding news, and even memes

Between this and the ChatGPT Atlas launch, we’re seeing a big push to “own” the consumer. IMO, Claude’s implementation of models is generally more elegant/powerful - but OpenAI’s products are more consumer accessible. It’s going to be an interesting few months!