Architecture

Ruby on Rails is probably the most token-efficient way to write, together with agents, a real web app that doesn't immediately fall apart with security holes and unscalable decisions. https://rubyonrails.org

Corollary: this is the best possible time for semi-technical non-engineers to catch up. Over the last 3 months, I went from someone who was a "technical PM" to ridiculously prolific at shipping massive amounts of (what appears to be) quality code. Systems thinking + domain expertise are now FAR more important than the syntax of individual lines of code. In fact... getting caught up in the lines of code might actually be a hindrance. For context: I learned C++ in high school, then I was an Excel-monkey in my consulting days (but building complex models over 10-20 weeks that were basically data applications). I forgot any form of syntax over the last 20 years, but those other experiences meant I built strong foundations in object-oriented programming, proper abstractions, systems thinking, and data structures. And I've spent most of my career in Finance, Biz Ops, Legal, HR... which means that I can be monstrously productive in building software for corporate finance teams. How productive? 500k+ LoC touched and hundreds of commits, in 11 weeks. Yes, yes, I know lines of code is not a KPI to optimize, but someone going from 0 to that order of magnitude should still paint a picture. And this isn't slop. It's reviewed by actual engineers on the team, and we're rigorous about PR reviews (in the earlier days I had to redo PRs from scratch many, many times because they weren't good enough). The overall process works beautifully, because I have the multi-year product roadmap and the codebase architecture in my head. I'm able to consider future needs and execute on months-long roadmaps... in days. I genuinely feel like I just got access to a video game I've been yearning to play for a long time... and somehow my copy came with God mode baked in. (This is funny because Andrej Karpathy is actually a god in this space, and I'm probably just getting used to the power of building software, with just a teeny bit of Dunning-Kruger to boot...) But still.
I share this because a few days ago I chatted with a close friend who's a really sharp systems thinker but not an engineer. He said, "I feel like I missed the wave, it's too late for me to pick up vibecoding." I told him that I only began working in our codebase in earnest in early October. That things are changing so fast that those who previously learned to code are having to sprint really hard to keep up, too. That knowing the line-by-line syntax isn't the most critical thing for *most* products. That the game is changing every week... but that's a huge gift to people like us, because it gives us a place to stand in order to move the world. And most of all, that if you're a systems thinker, have good taste in UI/UX, have domain expertise, and (I think this is somewhat important) love software... there is absolutely nothing stopping you from building something amazing. I *love* software. I thought several times about taking a detour to learn how to code, but life just never slowed down enough. Ironically, it was the practice of coding speeding up that gave me the opportunity to get on board. However long this lasts, I feel so fortunate to be able to actually get in there and build something that I love.

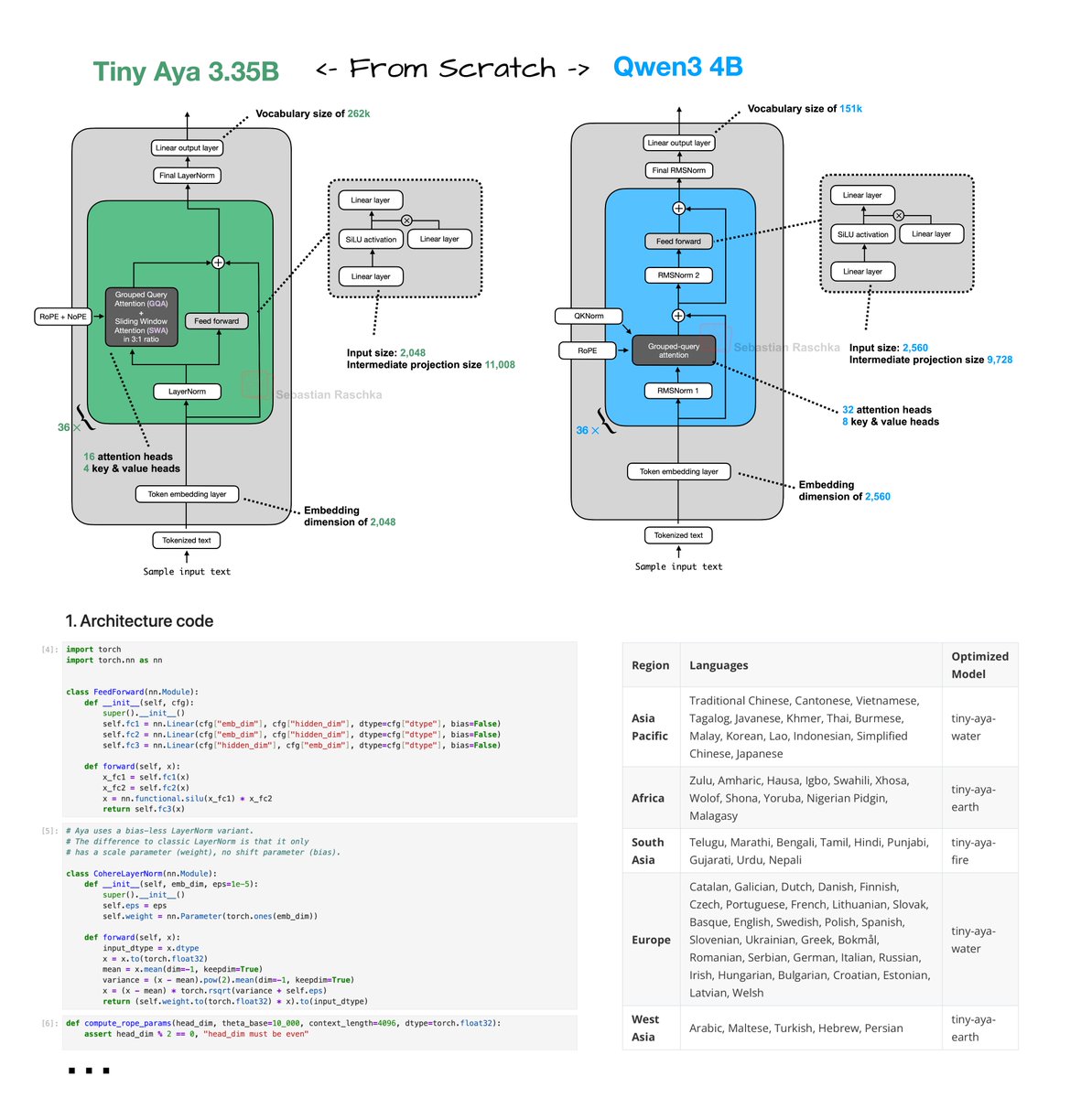

Tiny Aya reimplementation from scratch! Have been reading through the technical reports of the recent wave of open-weight LLM releases (more on that soon). Tiny Aya (released 2 days ago) flew a bit under the radar. Looks like a nice, small 3.35B model with the strongest multilingual support in its size class. Great for on-device translation tasks. Just did a from-scratch implementation here: https://github.com/rasbt/LLMs-from-scrat… Architecture-wise, Tiny Aya is a classic decoder-style transformer with a few noteworthy modifications (besides the obvious ones like SwiGLU and Grouped Query Attention):
1. Parallel transformer blocks. A parallel transformer block computes attention and MLP from the same normalized input, then adds both to the residual in one step. I assume this is to reduce serial dependencies inside a layer to improve computational throughput.
2. Sliding window attention. Specifically, it uses a 3:1 local:global ratio, similar to Arcee Trinity and Olmo 3. The window size is also 4096. Also, similar to Arcee, the sliding window layers use RoPE, whereas the full attention layers use NoPE.
3. LayerNorm. Most architectures moved to RMSNorm, as it's computationally a bit cheaper and performs well. Tiny Aya keeps it more classic with a modified version of LayerNorm (the implementation here is like standard LayerNorm but without the shift, i.e., bias, parameter).
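The three modifications can be sketched in plain Python, written for readability rather than speed. This sketch is not taken from the linked repository. The `attn` and `mlp` arguments are stand-in callables. The function names are hypothetical. Placing the full-attention layer last in each group of four is an assumption about how the 3:1 ratio is laid out.

```python
import math
from typing import Callable, List

Vector = List[float]
Sequence = List[Vector]  # one vector per token position


def layer_norm_no_bias(x: Vector, weight: Vector, eps: float = 1e-5) -> Vector:
    # Standard LayerNorm (subtract mean, divide by std, scale by weight),
    # but with no additive shift/bias parameter.
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    inv_std = 1.0 / math.sqrt(var + eps)
    return [w * (v - mean) * inv_std for v, w in zip(x, weight)]


def parallel_block(
    x: Sequence,
    norm: Callable[[Vector], Vector],
    attn: Callable[[Sequence], Sequence],
    mlp: Callable[[Vector], Vector],
) -> Sequence:
    # Normalize once; attention and MLP both read the same normalized input,
    # so neither branch waits on the other's output.
    h = [norm(tok) for tok in x]
    a = attn(h)                  # mixes information across positions
    m = [mlp(tok) for tok in h]  # applied position-wise
    # Single residual update: x + attn(norm(x)) + mlp(norm(x))
    return [
        [xi + ai + mi for xi, ai, mi in zip(x_t, a_t, m_t)]
        for x_t, a_t, m_t in zip(x, a, m)
    ]


def layer_types(n_layers: int, local_per_global: int = 3) -> List[str]:
    # 3:1 local:global pattern; ASSUMES the full layer closes each group of four.
    period = local_per_global + 1
    return ["full" if (i + 1) % period == 0 else "sliding" for i in range(n_layers)]


def can_attend(query_pos: int, key_pos: int, layer_type: str, window: int = 4096) -> bool:
    if key_pos > query_pos:      # causal: never attend to future tokens
        return False
    if layer_type == "sliding":  # local layers only see the last `window` tokens
        return query_pos - key_pos < window
    return True                  # full (global) layers see the whole prefix


def uses_rope(layer_type: str) -> bool:
    # RoPE on sliding-window layers, NoPE (no positional encoding) on full layers.
    return layer_type == "sliding"
```

Compared with a sequential block, `x + attn(norm1(x))` followed by an MLP on that result, the parallel form lets the two branches run concurrently. That is consistent with the throughput guess in the post.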

🆕 Spec-Driven Development https://youtube.com/watch?v=HY_JyxAZsiE Al Harris, Lead Dev of @kirodotdev, did a full workshop on Spec-Driven Development at AIE CODE, and we are excited to release it for the first time!

Here are my 2026 predictions for how AI will change software:
- An agent-native software architecture. Most new software will just be Claude Code in a trench coat: new features are just buttons that activate prompts to an underlying general agent.
- Designers get superpowers and become superstars. When software is cheap to build, designers become powerful. They can finally make anything they want without waiting on engineers, and if you can make a beautiful experience, you’ll stand out in a sea of vibe-coded apps.
- Agentic engineering becomes a new discipline. A new software engineering skill is emerging that is different both from vibe coding and from traditional engineering that uses AI. It is truly AI-native engineering from professional developers who don’t ever look at or write code. They’ll be the most productive category of engineer in 2026, and agentic engineering will become a discipline in itself.
- AI training that indexes on sense of self. To achieve true autonomy, AI agents will need to run for long stretches of time without constant supervision. To this end, we’ll see new training approaches that focus on giving agents their own sense of self, goals, and directions; we’ll start to hit the limits of people-pleasing, sycophantic AIs.
Want more? I sat down with @every COO @bran_don_gell to trade our 2026 predictions and reflect on @every’s banner year. If you want to know what comes next, watch below.
Timestamps:
- Introduction: 00:01:05
- Reflections on Every’s growth over the past year: 00:01:34
- What changes when a company grows from 20 people to 50: 00:09:38
- How “agent-native architecture” will change software in 2026: 00:11:55
- Why designers are slated to become power users of AI: 00:17:13
- The new kind of software engineer that will direct AI agents: 00:23:24
- Why the next wave of AI training will focus on autonomy: 00:33:42
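One way to picture "new features are just buttons that activate prompts to an underlying general agent" is the small sketch below. It assumes each feature is nothing more than a prompt template routed to one shared agent. `run_agent` is a hypothetical stand-in for whatever general agent sits underneath; it is not a Claude Code API. `Feature`, `make_app`, and the sample features are illustrative names only.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Feature:
    label: str            # text shown on the button
    prompt_template: str  # instructions handed to the general agent


def make_app(features: Dict[str, Feature], run_agent: Callable[[str], str]) -> Callable[..., str]:
    # Every feature routes through the same general-purpose agent, so
    # "shipping a feature" means adding a Feature entry, not new backend logic.
    def press(button_id: str, **context: str) -> str:
        feature = features[button_id]
        prompt = feature.prompt_template.format(**context)
        return run_agent(prompt)

    return press


# Hypothetical features and a fake agent, for illustration only.
FEATURES = {
    "summarize": Feature("Summarize", "Summarize this document: {doc}"),
    "translate": Feature("Translate", "Translate to {lang}: {doc}"),
}
fake_agent = lambda prompt: f"AGENT<{prompt}>"
press = make_app(FEATURES, fake_agent)
print(press("summarize", doc="Q3 report"))  # AGENT<Summarize this document: Q3 report>
```

The design choice this illustrates is that product logic moves out of code and into prompts. That is presumably why the prediction frames designers and agentic engineers as the ones who benefit.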

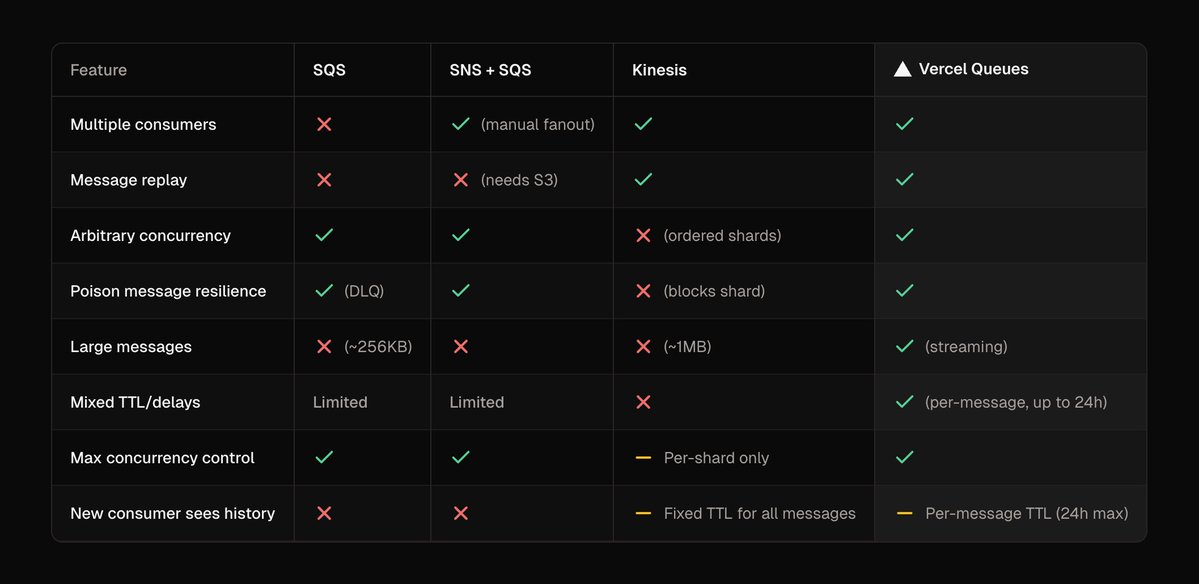

Vercel Queues learns extensively from its predecessors and peer primitives in the cloud ecosystem. The brand is "Queues", but the implementation is durable event streaming. We settled on an API that's very simple (2 key methods!), and yet insanely powerful… You can use it as-is, but Queues also serves as a foundation for higher-level DX tools. For instance, Workflow (http://useworkflow.dev) on Vercel is implemented via Queues. For Python folks, for example, Celery could be supported transparently with our serverless queues as its backend 🤤
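The post doesn't spell out the API, so the following is a hypothetical in-memory sketch of what "durable event streaming" behind a queue-shaped interface usually means. Messages are appended to a retained log instead of being deleted on delivery. Each consumer group tracks its own offset and advances it only after its handler succeeds, which gives at-least-once delivery. `EventStream` and the `send`/`receive` names are placeholders, not Vercel's actual methods. A real system would persist the log instead of keeping it in a Python list.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, List


class EventStream:
    """Hypothetical sketch: a retained, append-only log with per-group offsets."""

    def __init__(self) -> None:
        self._log: List[Any] = []  # stand-in for durable storage
        self._offsets: DefaultDict[str, int] = defaultdict(int)  # next position per group

    def send(self, message: Any) -> int:
        # Append and return the message's position; nothing is ever removed.
        self._log.append(message)
        return len(self._log) - 1

    def receive(self, group: str, handler: Callable[[Any], None]) -> int:
        # Deliver, in order, everything this group hasn't processed yet.
        handled = 0
        while self._offsets[group] < len(self._log):
            message = self._log[self._offsets[group]]
            handler(message)           # if this raises, the offset is not advanced...
            self._offsets[group] += 1  # ...so the message is redelivered next time
            handled += 1
        return handled
```

Every group reads the same log independently, so a billing consumer and an email consumer can each see every message. That is the main practical difference from a classic work queue, where an acknowledged message is removed and each message goes to a single consumer.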

Cloudflare suffered a service outage on February 20, 2026. A subset of customers who use Cloudflare’s Bring Your Own IP (BYOIP) service saw their routes to the Internet withdrawn via Border Gateway Protocol (BGP). Here's the breakdown of what happened, including our logic for the fix and long-term architectural improvements. https://cfl.re/4ru93tz

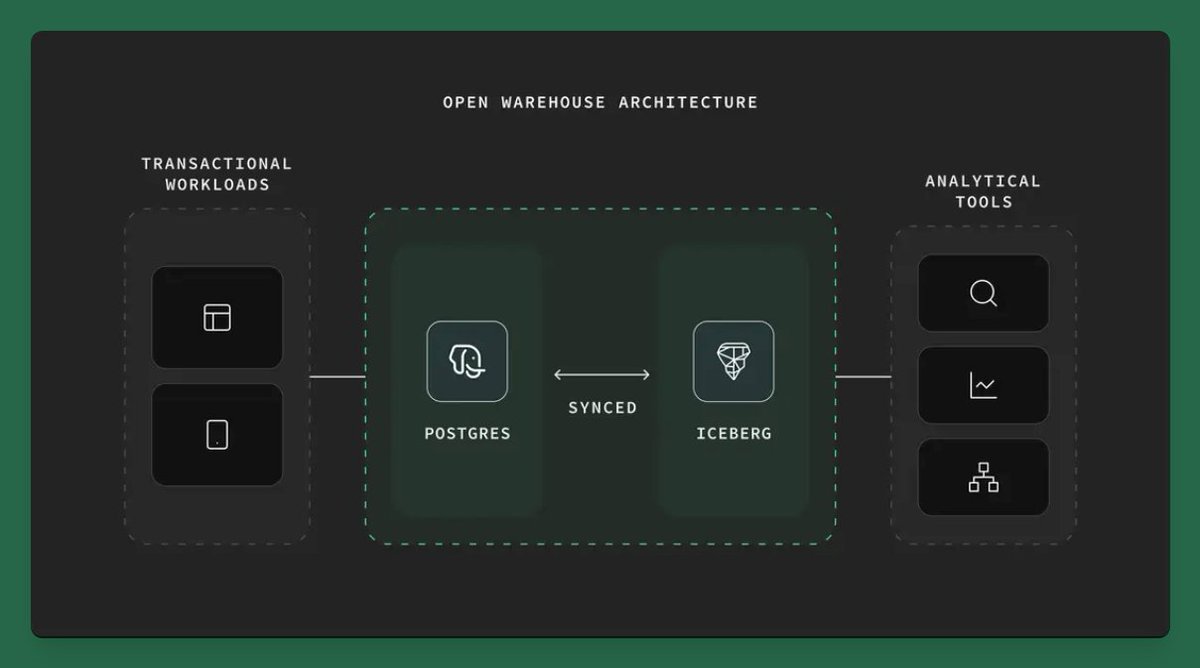

We will work in the open as we develop the Open Warehouse Architecture and the broader Postgres + Analytics vision > http://supabase.com/blog/hydra-joins-sup…

We have open-sourced our new 𝕏 algorithm, powered by the same transformer architecture as xAI's Grok model. Check it out here: https://github.com/xai-org/x-algorithm…

Microservices is the software industry’s most successful confidence scam. It convinces small teams that they are “thinking big” while systematically destroying their ability to move at all. It flatters ambition by weaponizing insecurity: if you’re not running a constellation of services, are you even a real company? Never mind that this architecture was invented to cope with organizational dysfunction at planetary scale. Now it’s being prescribed to teams that still share a Slack channel and a lunch table. Small teams run on shared context. That is their superpower. Everyone can reason end-to-end. Everyone can change anything. Microservices vaporize that advantage on contact. They replace shared understanding with distributed ignorance. No one owns the whole anymore. Everyone owns a shard. The system becomes something that merely happens to the team, rather than something the team actively understands. This isn’t sophistication. It’s abdication. Then comes the operational farce. Each service demands its own pipeline, secrets, alerts, metrics, dashboards, permissions, backups, and rituals of appeasement. You don’t “deploy” anymore—you synchronize a fleet. One bug now requires a multi-service autopsy. A feature release becomes a coordination exercise across artificial borders you invented for no reason. You didn’t simplify your system. You shattered it and called the debris “architecture.” Microservices also lock incompetence in amber. You are forced to define APIs before you understand your own business. Guesses become contracts. Bad ideas become permanent dependencies. Every early mistake metastasizes through the network. In a monolith, wrong thinking is corrected with a refactor. In microservices, wrong thinking becomes infrastructure. You don’t just regret it—you host it, version it, and monitor it. The claim that monoliths don’t scale is one of the dumbest lies in modern engineering folklore. What doesn’t scale is chaos. What doesn’t scale is process cosplay. 
What doesn’t scale is pretending you’re Netflix while shipping a glorified CRUD app. Monoliths scale just fine when teams have discipline, tests, and restraint. But restraint isn’t fashionable, and boring doesn’t make conference talks. Microservices for small teams is not a technical mistake—it is a philosophical failure. It announces, loudly, that the team does not trust itself to understand its own system. It replaces accountability with protocol and momentum with middleware. You don’t get “future proofing.” You get permanent drag. And by the time you finally earn the scale that might justify this circus, your speed, your clarity, and your product instincts will already be gone.

.@avoice_ai helps architects automate the boring but important paperwork behind every building project, saving firms 15 hours per architect per week. Congrats on the launch @chawinasava and @chawitasava! https://ycombinator.com/launches/PQC-avo…