Technology


Introducing Nano Banana 2, our best image model yet 🍌🍌 It uses Gemini’s understanding of the world and is powered by real-time information and images from web search. That means it can better reflect real-world conditions in high fidelity. Check out "Window Seat," a demo using Nano Banana 2’s world understanding to generate more accurate views from any window in the world, pulling live local weather info with 2K/4K specs. The precision is mind-blowing. Rolling out today as the new default in the @Geminiapp, Search (across 141 countries), and Flow, and available in preview via @GoogleAIStudio and Vertex AI. Also available in Google @Antigravity.

I meet a lot of founders who are worried by the rapid rate of technological change. They shouldn't be. It may feel uncomfortable, but techno-turbulence is net good for startups. They're much more likely to adapt successfully to some big change than incumbents are.

Ben Horowitz on the infrastructure behind the AI economy: "Crypto is the natural money for AI because it’s internet-native money." "AI is global. Crypto is global." "There needs to be not just a ledger of money, but probably a ledger of truth for AI to really fulfill its potential." "I think people are probably underestimating how crypto and AI work together to form the AI economy." "Networks and computers tend to grow together, and I think that AI is obviously a new kind of computer and crypto is a new kind of network." @bhorowitz on Moonshots with @PeterDiamandis

I'm Boris and I created Claude Code. I wanted to quickly share a few tips for using Claude Code, sourced directly from the Claude Code team. The way the team uses Claude is different from how I use it. Remember: there is no one right way to use Claude Code -- everyone's setup is different. You should experiment to see what works for you!

.@GenAstronautics builds autonomous robotics for space. In microgravity, proteins crystallize without defects and semiconductors form without flaws, but astronaut time is scarce. They are enabling scale for the work that can't be done anywhere else. Congrats on the launch, @BramSchork and @ShiboZhou! https://ycombinator.com/launches/PY8-gen…

the truth is, no matter how hard you try, you’ll never be able to keep up with 100% of what’s going on in AI right now. there’s just too much action

Excited to announce Claude for Open Source ❤️ We're giving 6 months of free Claude Max 20x to open source maintainers and core contributors. If you maintain a popular project or contribute across open source, please apply! https://claude.com/contact-sales/claude-…


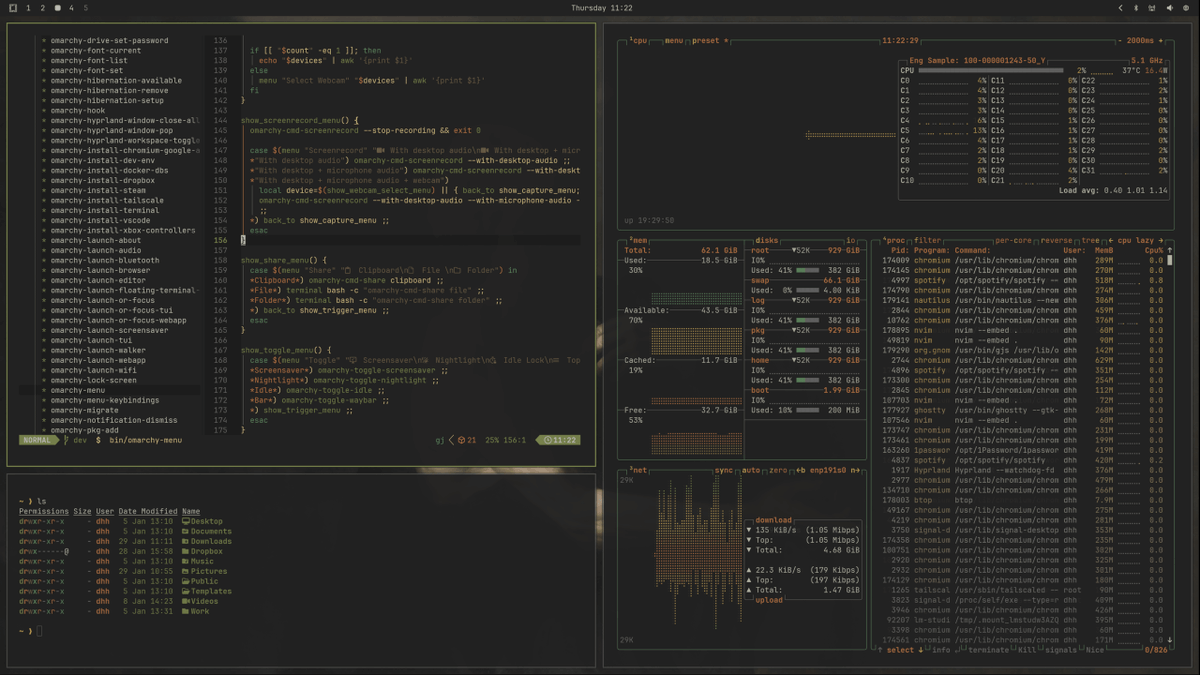

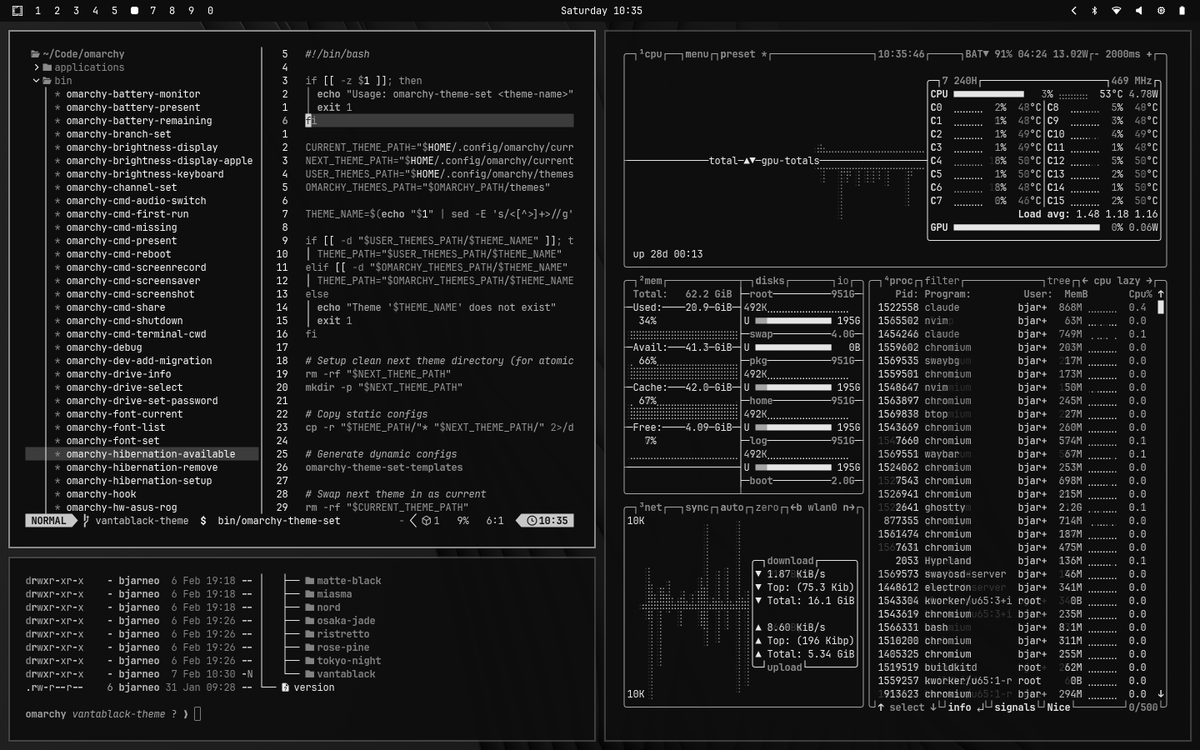

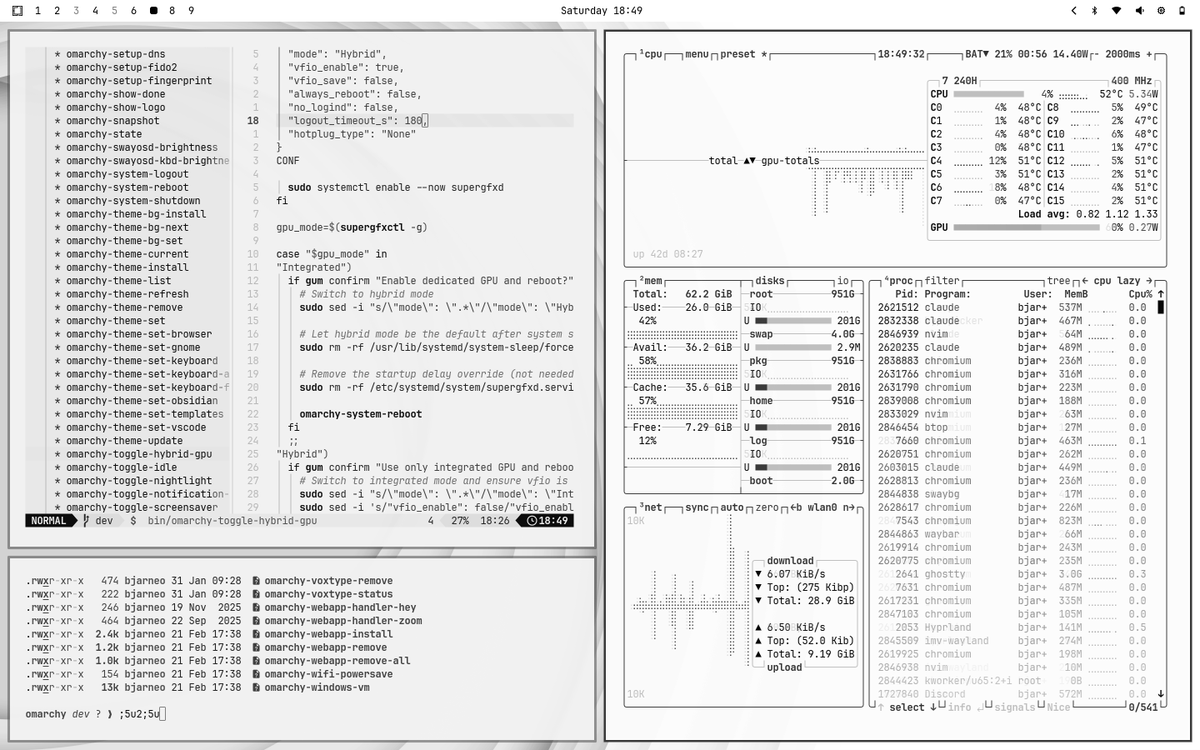

Omarchy 3.4 is out! Massive release with 61 contributors, three new themes, tailored Tmux, new screenshot flow, new agent features (claude by default + tmux swarm!), keyboard RGB theme syncing, and a million other things. https://github.com/basecamp/omarchy/rele…

"There’s at least a reasonable chance that 2026 Q1 will be looked back upon as the first quarter of the singularity." Stripe CEO Patrick Collison: "There’s been a phase transition in 2025." "There are many more businesses getting started and the average, the median business is in fact performing better." "Looking at real purchasing behavior on Stripe… end of ’25, beginning of ’26 is when I feel like we’re really starting to see it." @patrickc with @collision on @tbpn

New @openclaw beta bits are up! External Secrets Management (openclaw secrets), CP thread-bound agents (first-class runtime), WebSocket support for codex, and loads more! https://github.com/openclaw/openclaw/rel…


More than a billion images generated later, we love seeing what you create with Nano Banana. Today, we’re inviting more people to join in on the fun with the launch of 🍌Nano Banana 2🍌. This state-of-the-art model is exceptionally good at things like:

- Creating images that tap into the Gemini model’s real-world knowledge base and are powered by information and images from web search to more accurately render specific landmarks, locations, objects, and beyond
- Adapting creative assets for global markets with regionally relevant localization
- Dynamically editing aspect ratios and upscaling to 2K and 4K resolutions
- Generating multiple cohesive images that fit together in one world, story, or concept

Sammy Azdoufal (@n0tsa) recently thought it might be fun to see if he could control his new DJI robotic vacuum with his PS5 controller. Within a few hours, he had the ability to both see and hear inside 7,000 DJI vacuum owners' homes, and control the devices with super low latency. Sammy discovered the bug when he was trying to program the vacuum to make crying noises when it reached 30% battery. While reverse engineering the DJI Home app so he could figure out his vacuum's battery status, DJI sent him data on all existing DJI vacuums of that model. Sammy then contacted his friend, who also had a DJI vacuum, and quickly found he could both see and hear his friend through his DJI vacuum, as well as control it. Sammy says DJI hasn't fixed the bug completely, and that being able to see through the vacuums is still possible. Here's his full breakdown of finding the bug:

ShortKit lets every app roll its own TikTok-quality feed. Built by a former YT infra engineer, ShortKit's managed SDKs and video infra let teams ship best-in-class short-form video experiences without needing in-house video engineering teams. Congrats on the launch, @neilbhammar and @michaelmahersel! https://ycombinator.com/launches/PXQ-sho…

We’re launching Nano Banana 2, built on the latest Gemini Flash model. 🍌 It’s state-of-the-art for creating and editing images, combining Pro-level capabilities with lightning-fast speed. 🧵

Say hello to Nano Banana 2, our best image generation and editing model! 🍌 You can access Nano Banana 2 through AI Studio and the Gemini API under the name Gemini 3.1 Flash Image. We are also introducing new resolutions (lower cost) and tools like Image Search!

We've identified, responsibly disclosed, and confirmed 2 critical, 2 high, 2 medium, and 1 low severity security vulnerabilities in Cloudflare's vibe-coded framework Vinext. We believe the security of the internet is the highest priority, especially in the age of AI. Vibe coding is a useful tool, especially when used responsibly. Our security research and framework teams are extending their help and expertise to Cloudflare in the interest of the public internet's security.

The one skill everyone who uses AI needs to master in 2026 is meta-prompting: using an LLM to generate, refine, and improve the very prompts you use to get work done. https://garryslist.org/posts/metaprompti…
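As a rough illustration of the meta-prompting idea (an LLM rewriting the prompt you will later use), here is a minimal sketch. Everything in it is an assumption for illustration and not taken from the linked post: `fake_llm` is a hypothetical offline stub standing in for a real model call, and `META_TEMPLATE` and `metaprompt` are made-up names.

```python
from typing import Callable

# Hypothetical instruction wrapper; the wording is illustrative only.
META_TEMPLATE = (
    "You are a prompt engineer. Rewrite the prompt below so it is specific,\n"
    "states the desired output format, and lists constraints.\n"
    "Return only the rewritten prompt.\n"
    "{draft}"
)


def fake_llm(prompt: str) -> str:
    """Offline stub (assumption): tags the last line instead of calling a model."""
    return "Improved prompt: " + prompt.splitlines()[-1].strip()


def metaprompt(draft: str, llm: Callable[[str], str], rounds: int = 2) -> str:
    """Feed the current prompt back to the model for a fixed number of rounds."""
    current = draft
    for _ in range(rounds):
        # Each round asks the model to improve the previous round's output.
        current = llm(META_TEMPLATE.format(draft=current))
    return current


if __name__ == "__main__":
    print(metaprompt("Summarize this changelog.", fake_llm, rounds=2))
```

To try it against a real model, pass any function that takes a prompt string and returns the model's text in place of `fake_llm`. A fixed round count keeps the loop bounded, since a model will usually keep producing rewrites for as long as it is asked.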
