We're proud to support @LACMA's Art + Technology Lab—a program that empowers artists to prototype ideas at the edges of art, science, and emerging technology. The 2026 call for proposals is open to artists worldwide. Grants up to $50K. Apply by Apr 22: http://lacma.org/art/lab/grants

Art+Tech—Call for Proposals 2026

World Labs CEO Fei-Fei Li: Language alone is a lossy representation of the physical world. "Just a simple meal of making pasta... one could imagine using language to describe let's say about 15 minutes or 20 minutes of that process. But it’s still a lossy representation." "The nuance of how you cook the sauce, how you put the pasta in the water, what the pasta [does] in the water is impossible to use language alone to describe." "So much of the physical world’s process... is beyond the description of language." @drfeifei @theworldlabs

We’re partnering with government bodies and local institutions across India to accelerate discoveries in science and education. 🇮🇳 From training and mentorship for students to powering innovation hubs, we’re supporting India to apply AI where it can have the most impact. → https://goo.gle/4rrFYiy

We’ve upgraded our specialized reasoning mode Gemini 3 Deep Think to help solve modern science, research, and engineering challenges – pushing the frontier of intelligence. 🧠 Watch how the Wang Lab at Duke University is using it to design new semiconductor materials. 🧵

Thrilled to share that I have joined @AnthropicAI as a life science researcher! I am confident that Claude will do amazing things to accelerate biology. Big things ahead!

You know you are living through a discontinuity in a field when almost all of the science fiction about AI is obviously off (with a few notable exceptions). Like reading early 1900s science fiction where everything is about the canals of Mars right after Mariner actually landed.

Patrick Collison on what changes when biology becomes programmable: "We, humanity, have never cured a complex disease." "Most cardiovascular disease, most cancers, most autoimmune disease, most neurodegenerative disease... For none of them can we really say that we've cured it, that we understand the causal pathways in meaningful detail." "Then over the last 10 ish years... we’ve gotten three new classes of technology in biology." "If you put those together, you now have the ability… to read, think, and to write. And this starts to really feel like a new kind of Turing loop." @patrickc with @mntruell

Addressing the India AI Action Summit today was a profound personal honor. AI can improve billions of lives and solve some of the hardest problems in science. The best outcomes of AI are not guaranteed. We must pursue AI boldly, approach it responsibly, and work together through this moment.

Arguments about what constitutes AGI have collapsed into meaningless Scholasticism and are no longer very useful, if they ever were in the first place. We didn’t learn enough about human intelligence in the times before AI to agree on any definitions at this late date.

Increasing problem with publishing work on AI is that the publication process is much slower than working paper process, so when papers finally get full peer reviews, authors are asked to account for newer papers that are built on the paper under review! No real norms around this

AI Is In Its Gentleman Science Era. AI research is wide open for builders with heretical ideas. The window won’t last forever. https://garryslist.org/posts/ai-is-in-it…

For more than a decade, Google researchers have been redefining the scientifically impossible, from flood forecasting to brain mapping to our most recent announcement of preserving the genetic code of 13 new endangered species of animals. Learn how ten years of AI innovation for genomics at @GoogleResearch are contributing to this goal.
- DeepConsensus sits at the instrument level, removing sequencing errors at the source to produce more of the high-quality data needed for genome assembly.
- DeepVariant finds genetic variants. For example, the University of Otago used it to analyze the genome of every living kākāpō, powering the precise breeding plan that is currently pulling the species back from extinction.
- DeepPolisher helps make sure the assembled genome gets 99.999%+ accuracy, which is important for tasks like finding gene models that matter for disease.
Learn more (and enjoy some adorable animal photos) here:

How we're helping preserve the genetic information of endangered species with AI

I love the expression “food for thought” as a concrete, mysterious cognitive capability humans experience but LLMs have no equivalent for. Definition: “something worth thinking about or considering, like a mental meal that nourishes your mind with ideas, insights, or issues that require deeper reflection. It's used for topics that challenge your perspective, offer new understanding, or make you ponder important questions, acting as intellectual stimulation.” So in LLM speak it’s a sequence of tokens such that, when used as a prompt for chain of thought, the samples are rewarding to attend over, via some yet undiscovered intrinsic reward function. Obsessed with what form it takes. Food for thought.

New paper from Google on accelerating science using Gemini (as opposed to its specialized research AIs). Lots of case studies and interesting advice on ways to work with these systems. "We view the AI as a tireless, knowledgeable, and creative bright junior collaborator."

Our AI tools like DeepVariant and DeepPolisher are helping researchers sequence genomes for endangered species, compressing what once took years into just days. Genomes of 13 species are free and available for use by conservation researchers. Now @Googleorg is helping partners

The transition from “AI can’t do novel science” to “of course AI does novel science” will be like every other similar AI transition. First the over-enthusiastic claims, then smart people use AI to help them, then AI starts to do more of the work, then minor discoveries, & then…

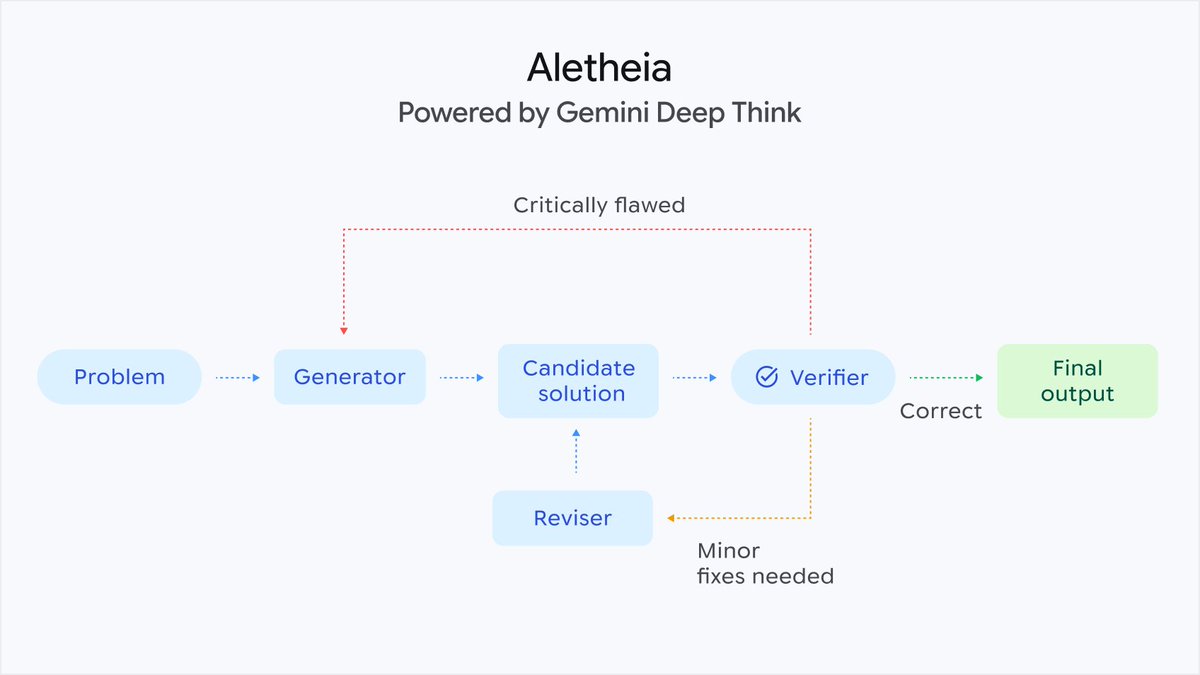

How could AI act as a better research collaborator? 🧑‍🔬 In two new papers with @GoogleResearch, we show how Gemini Deep Think uses agentic workflows to help solve research-level problems in mathematics, physics, and computer science. More → https://goo.gle/4aGs3Pz

.@beyondreachlabs builds deployable solar arrays that grow from the size of a dining table to a football field in orbit, unlocking massive power for next-gen spacecraft. Congrats on the launch, @fogmb and Pele! https://ycombinator.com/launches/PUY-bey…

Real question: Will AI lower human IQ?

Gemini 3 Deep Think is getting an upgrade 🧠 By blending deep scientific knowledge with advanced engineering utility, Deep Think now moves beyond abstract theory to drive practical applications. Researchers are already using it to accelerate their work in the real world:
- Materials science: A university lab used Deep Think to optimize the growth of complex crystals that are candidates for high-temperature semiconductors.
- Mechanical engineering: A researcher demonstrated how Deep Think can be used to iterate on physical prototypes at the speed of software. When applied to things like assistive devices, this pace means faster improvements for life-changing technology (more information on this use case in the video below!).
The updated Deep Think is available in @GeminiApp for Google AI Ultra subscribers.